Once again, beautiful weather welcomed us in Redmond when we arrived for the first Cortana Analytics workshop. People from all over the world joined this event, which was highly anticipated in the Microsoft data community.

As Codit is betting big on new scenarios such as SaaS and hybrid integration, API management and IoT, we understand that the real value for our customers will be gained through (Big) Data intelligence. Next to a lot of new faces, there were also quite a few integration companies attending the workshop, such as our partner Axon Olympus, our Colombian friends from IT Sinergy and Chris Kabat from SPR.

I will start with some impressions and opinions from our side. After that, you can find more information on some of the sessions we attended.

Key takeaways

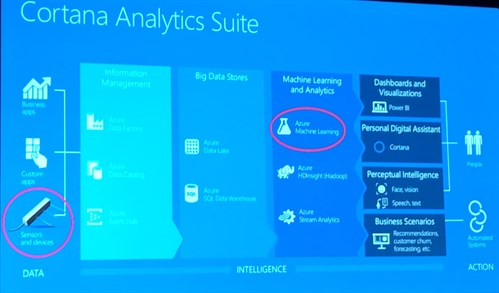

At first sight, the Cortana Analytics suite combines many of the data services that already exist on Azure:

- Information management: Azure Data Catalog, Azure Event Hubs, Azure Data Factory

- Big data stores: Azure Data Lake, Azure SQL Data Warehouse

- Machine Learning and Analytics: Azure ML, Azure Stream Analytics, Azure HDInsight

- Interaction: Power BI (dashboarding), Cortana (personal assistant), Perceptual Intelligence (Face, Vision, Speech, Text)

So far, no specific pricing information has been announced. The only statement in this regard was “One simple subscription model”.

There are many ways to implement a Big Data solution on Azure, and new services get added frequently. It will become very important to make the right choices for the right solution:

- Will we store our data in blob storage or in HDFS (Data Lake)?

- Will we leverage Spark, Storm or the easier Stream Analytics?

- How will we query data?

Keynote session

The keynote session, in a packed McKinley room, was both entertaining and informative. The event was kicked off by Corporate Vice President Joseph Sirosh, who positioned the Big Data and Analytics offering of Microsoft and Cortana Analytics. The suite should give people the answers to typical questions such as ‘What happened?’, ‘Why did it happen?’, ‘What will happen?’ and ‘What should I do?’. To answer those questions, the Cortana Analytics suite provides the tools to access data, analyze it and take decisions.

Every conference has a diagram that returns in every single session. For Cortana Analytics, it is the following one, which shows all services that are part of the platform. (Apologies for the phone picture quality.)

The top differentiators for Cortana Analytics Suite

- Agility (Volume, Variety, Velocity)

- Platform (storage, compute, real-time ingest, connectivity to all your data, information management, big data environments, advanced analytics, visualization, IDEs)

- Assistive intelligence (Cortana!)

- Apps (Vertical toolkits, hosted APIs, ecosystem)

- Features: Elasticity, Fully managed, Economic, Open Source (R & Python)

- Facilitators

- Secure, compliant, auditable

- One simple subscription model

- Future proof, with research of MSR & Bing

Cortana Analytics should be agile, simple and beautiful.

A firm statement was that “if you can cook by following a recipe, you should be able to use Cortana Analytics”. While I don’t think that means we can go and fire all of our data scientists, I really believe that the technology to perform data analytics is becoming more easily available and understandable for traditional developers and architects.

This is achieved through the following:

- Fully managed cloud services

- A fully integrated platform

- Very simple to purchase

- Productize, simplify and democratize

- Partner ecosystem

Demos

A lot of the services were demonstrated, using some interesting and well-known examples. The how-old.net demo and its underlying architecture were especially interesting. That application was only possible through the tremendous scalability of Azure and the intelligent combination of the right services on the Azure platform.

Demystifying Cortana Analytics

Jason Wilcox, Director of Engineering, was up next. During a long intro on data analytics, he described the ‘process’ for data analytics as follows:

1. Find out what data sets are available

2. Gain access to the data

3. Shape the data

4. Run first experiment

5. Repeat steps 1 to 4 until you get it right

6. Find the insight

7. Operationalize & take action

In his talk, Jason explained the following things about Cortana Analytics:

- It is a set of fully managed services (true PaaS!)

- It works with all types of data (structured and unstructured) at any scale. Azure Data Lake is a native HDFS (Hadoop Distributed File System) for the cloud. It is integrated with HDInsight, Hortonworks and Cloudera (and more services to come). It is accessible from all HDFS compatible projects, such as Spark, Storm, Flume, Sqoop, Kafka, R, etc. And it is fully built on open standards!

- Operationalize the data through Azure Data Catalog (publish data assets) which will be integrated in Cortana Analytics

- Cortana Analytics is open to embrace and extend and allow customers to use the best-of-breed tools.

- It’s an end-to-end solution from data ingestion to presentation.

Real time data processing: how do I choose the right solution?

Two Europeans from the Data Insight Global Practice (Simon Lidberg, SE and Benjamin Wright-Jones, UK) gave an overview of the three major streaming analysis services available on Azure: Azure Stream Analytics, Apache Storm and Apache Spark.

Azure Stream Analytics

Azure Stream Analytics is a well-known service for us, and we’ve been using it for more than a year now. We also have some blog posts and talks about it. Simon gave a high-level overview of the service:

- Fully managed service: No hardware deployment

- Scalable: Dynamically scalable

- Easy development: SQL Language

- Built-in monitoring: View system performance through Azure portal

The typical Twitter sentiment demo was shown afterwards. In my opinion, Azure Stream Analytics is indeed extremely easy to get started with and well suited to building quick-win scenarios on Azure for telemetry ingestion, alerting and out-of-the-box reporting.
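To give a feel for the kind of windowed aggregation that telemetry scenarios typically rely on, here is a minimal plain-Python sketch of a tumbling-window average. It is not Stream Analytics code and does not use any Azure API; the function name, the `(timestamp, value)` event shape and the sample readings are assumptions made purely for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def tumbling_window_average(
    events: Iterable[Tuple[float, float]], window_seconds: float
) -> Dict[float, float]:
    """Average (timestamp, value) events per fixed, non-overlapping window.

    Hypothetical helper: each event falls into exactly one window, keyed by
    the window's start time in seconds.
    """
    sums: Dict[float, float] = defaultdict(float)
    counts: Dict[float, int] = defaultdict(int)
    for timestamp, value in events:
        # Round the timestamp down to the start of its window.
        window_start = (timestamp // window_seconds) * window_seconds
        sums[window_start] += value
        counts[window_start] += 1
    return {start: sums[start] / counts[start] for start in sorted(sums)}


# Made-up telemetry readings: (seconds since start, measured value).
readings = [(0.0, 10.0), (4.0, 20.0), (10.0, 30.0), (19.0, 50.0), (21.0, 5.0)]
averages = tumbling_window_average(readings, window_seconds=10.0)
print(averages)
```

In a managed streaming service, this grouping is what you would declare in a query instead of coding by hand, which is a large part of why getting started is so quick.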

Storm on HDInsight

Storm is a streaming framework available on HDInsight. A quick overview of HDInsight was given, positioning things like Map/Reduce (Batch), Pig (Script), Hive (SQL), HBase (NoSQL) and Storm (Streaming).

Apache Storm was summarized as follows:

- Real time processing

- Open Source

- Visual Studio integration (C# and Java)

- Available on HDInsight

Spark on HDInsight

Spark is extremely fast (3x faster than Hadoop in 2014). Spark also unifies and combines Batch processing, Real Time processing, Stream Analytics, Machine Learning and Interactive SQL.

Spark is integrated very well with the Azure platform. There is an out of the box PowerBI connector and there is also support for direct integration with Azure Event Hubs.

The differentiators for Spark were described as follows:

- Enterprise Ready (fully managed service)

- Streaming Capabilities (first class connector for Event Hubs)

- Data Insight

- Flexibility and choice

Spark vs Storm comparison

Spark differs in a number of ways:

- Workload: Spark batches incoming updates into small batches, whereas Storm processes individual events

- Latency: Seconds latency (Spark) vs Sub-second latency (Storm)

- Fault tolerance: Exactly once (Spark) vs At least once (Storm)
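The workload difference in the list above can be illustrated with a small plain-Python sketch. It uses neither Spark nor Storm; the function names are hypothetical, and batching by a fixed event count is a simplification chosen for illustration (a real micro-batch engine would typically cut batches by time interval).

```python
from typing import Callable, Iterable, List


def process_per_event(
    events: Iterable[int], handler: Callable[[List[int]], None]
) -> None:
    """Hand every event to the handler as soon as it arrives (per-event style)."""
    for event in events:
        handler([event])


def process_micro_batches(
    events: Iterable[int], batch_size: int, handler: Callable[[List[int]], None]
) -> None:
    """Collect events into small batches before handing them over (micro-batch style)."""
    batch: List[int] = []
    for event in events:
        batch.append(event)
        if len(batch) == batch_size:
            handler(batch)
            batch = []  # start a fresh list so delivered batches are not mutated
    if batch:
        handler(batch)  # flush the final, possibly smaller batch


per_event_calls: List[List[int]] = []
process_per_event(range(5), per_event_calls.append)

micro_batch_calls: List[List[int]] = []
process_micro_batches(range(5), 2, micro_batch_calls.append)

print(per_event_calls)
print(micro_batch_calls)
```

Waiting for a batch to fill up is what adds latency in the micro-batch approach, while handling each event individually keeps latency low at the cost of more handler invocations.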

When to use what?

The speakers compared the three technologies in a table. My advice would be to opt for Stream Analytics for quick wins and straightforward scenarios. For more complex and specialized scenarios, Storm might be a better solution. As usual, the typical answer to the question above is “it depends”.

Below is a good comparison table, where the ‘*’ typically means “with some limitations”.

| | ASA | Storm | Spark |
|---|---|---|---|
| Multi tenant service | Yes | No | No |
| Deployment model | PaaS | PaaS* | PaaS* |
| Extensibility | Low | High | High |
| Deployment complexity | Low | Low* | Low* |
| Cost | Low | Med | Med |
| Open Source Support | No | Yes | Yes |
| Programmability | SQL* | .NET, Java, Python | SparkSQL, Scala, Python, Java… |
| Power BI Integration | Yes, native | REST API | Yes, native |

Overview of the Cortana Analytics Big Data stack (pt2)

In this session, four technologies were demonstrated and presented by four different speakers. A very good session to get insight into the broad ecosystem of HDInsight-related services.

We were shown Hadoop (Hive for querying), Storm (for streaming), HBase (NoSQL) and the new Big Data applications that will become available on the new Azure portal soon.

A nice demo that leverages HBase on Hadoop is the Tweet Sentiment demo: http://tweetsentiment.azurewebsites.net/

Real-World Data Collection for Cortana Analytics

This session presented a very interesting scenario from a real-world IoT project (soil moisture). It’s always good to hear people talk from the field: people who have had issues and solved them, people who have the true experience. This was one of those sessions. It’s hard to give a good summary, so I will just write down some of the findings that I took away.

- You need to get the data right!

- Data scientists should know about the meaning of the data and sit down with the right functional people.

- An IoT solution should be built in such a way that it supports changing sensors, data types and versions over time.
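One way to cope with changing sensors and message versions is to tag every message with a version and dispatch to a version-specific parser. The sketch below is only an illustration of that idea, not what the speakers built: the field names, the two message versions and the `Reading` type are all hypothetical.

```python
import json
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Reading:
    """Normalized soil moisture reading, independent of the wire format."""
    sensor_id: str
    moisture_percent: float


def _parse_v1(payload: dict) -> Reading:
    # Hypothetical v1 format: moisture sent as a 0..1 fraction under "m".
    return Reading(payload["id"], payload["m"] * 100.0)


def _parse_v2(payload: dict) -> Reading:
    # Hypothetical v2 format: explicit field names, moisture already in percent.
    return Reading(payload["sensorId"], payload["moisturePercent"])


# Supporting a new sensor generation means registering one more parser here.
PARSERS: Dict[int, Callable[[dict], Reading]] = {1: _parse_v1, 2: _parse_v2}


def parse_message(raw: str) -> Reading:
    """Parse a JSON message; messages without a version are treated as v1."""
    payload = json.loads(raw)
    version = payload.get("version", 1)
    if version not in PARSERS:
        raise ValueError(f"unsupported message version: {version}")
    return PARSERS[version](payload)


old = parse_message('{"id": "probe-7", "m": 0.25}')
new = parse_message('{"version": 2, "sensorId": "probe-7", "moisturePercent": 31.5}')
print(old, new)
```

Because downstream analytics only ever see the normalized `Reading`, older devices in the field can keep sending their original format while newer ones are rolled out.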

Data Warehousing in Cortana Analytics

In this session, Matt Usher gave a good overview of the highly scalable Azure SQL Data Warehouse offering. The characteristics of Azure SQL DW are:

- You can start small, but scale huge

- Designed for on-demand scale (<1 minute for resizing!)

- Massive parallel processing

- Petabyte scale

- Combine relational and non-relational data (through PolyBase with HDInsight!)

- It is integrated with AzureML, Power BI and Azure Data Factory

- There is SQL Server compatibility (UDFs, table partitioning, collations, indices and columnstore support)

Closing keynote: the new Power BI

James Phillips, CVP Microsoft

Power BI is obviously the flagship visualization tool that gets a lot of attention. While it has shortcomings for a lot of scenarios, it’s indeed an awesome tool that allows you to build reports very quickly. In this session, we got an overview of the new enhancements of Power BI and some insight into what’s coming next.

While most of these features were known, it was good to get an overview and recap:

- Power BI content packs

- Custom Power BI visuals

- Natural language query

- On-premises data connectivity

Some things I did not know yet:

- There is R support in Power BI Desktop (plotting graphs and generating word clouds)

- When you add devToolsEnabled=true to the URL, custom dev tools become available in Power BI

- Cortana can be integrated with Power BI.

This was it for the first day. You can expect another blog post on Day 2 and more on Machine Learning from my colleague Filip.

Cheers! Sam
