This year I’ve started working with Kubernetes and one of my main focuses has been scalability and how it impacts your application and infrastructure design. It’s an interesting subject to look into, certainly if you add autoscaling to the mix.

This series will walk you through the basics of the scalability aspects in Kubernetes and give you an idea of what it provides, how you can use it yourself and how you should design your applications to build your own scalable applications.

The series is divided into the following parts:

- Part I – A Primer

- Part II – Cluster Autoscaler

- Part III – Application Autoscaling

- Part IV – Scaling based on Azure metrics

- Part V – Autoscaling is not easy

This first article will explain what components are available in Kubernetes, how you can perform manual scaling activities and how you should design your application so it can be fully scalable.

Scaling Kubernetes

In Kubernetes there are two main areas where it provides scalability capabilities:

- Cluster Scaling – Add and remove nodes to the cluster to provide more resources to run on. This sort of scaling is done on an infrastructure level.

- Application Scaling – Influence how your applications are running by changing the characteristics your pods. Either by adding more copies of your application or changing the resources available to run on.

However, before you can start scaling on an application-level, we first need to make sure that the cluster provides enough resources to satisfy the application scaling needs.

Let’s have a look at scaling our cluster manually.

Scaling Clusters

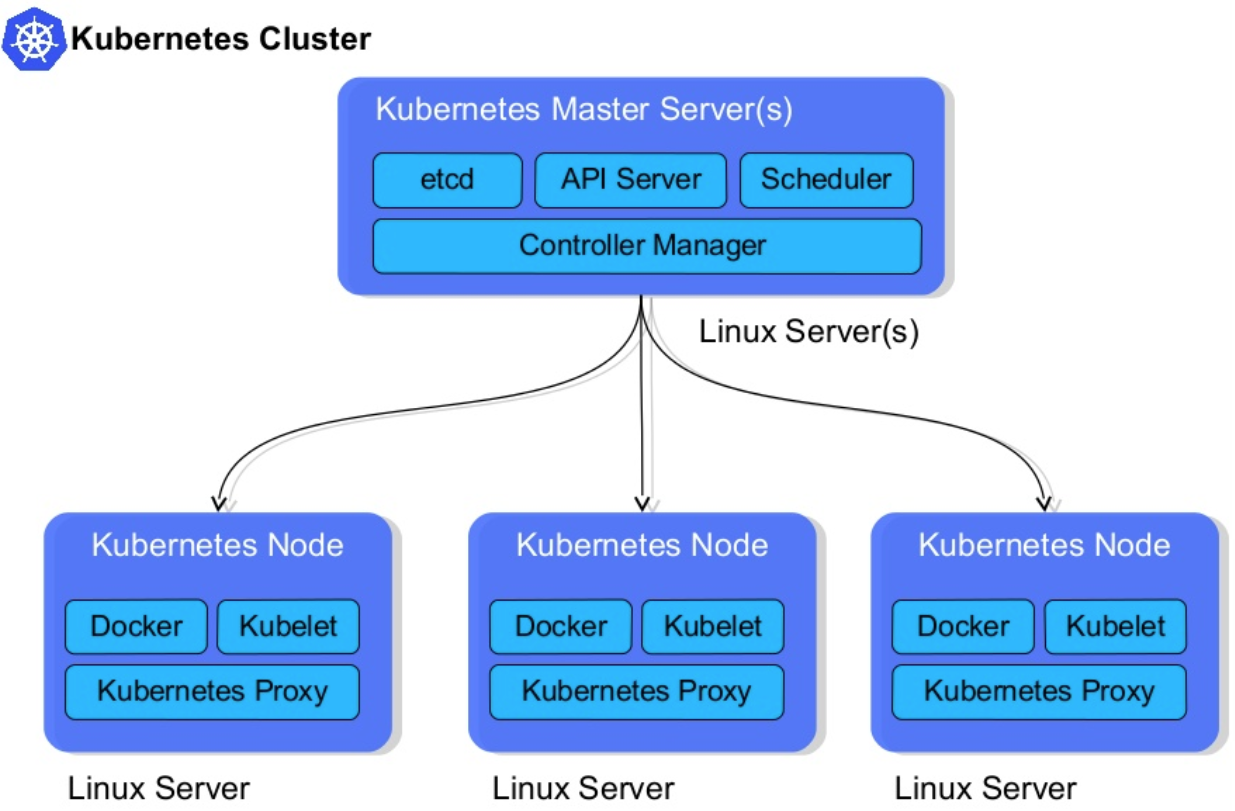

Kubernetes clusters consist of two types of nodes — master and worker nodes.

While the master nodes are in charge of providing a control plane for the cluster, managing the worker nodes and making sure everything runs smoothly inside of the cluster — the worker nodes are what we need to scale.

These worker nodes are the nodes that will run your effective workloads. Every node runs a kubelet which is an agent that is used by the master node to manage workloads running on this node.

Details about how the cluster works are beyond the scope of this post, but you can read more about it here.

Here’s a visual overview by Steve Watt:

In order to provide enough resources for our applications, we need to make sure that our cluster has enough worker nodes to run all the applications on. This is usually done before the cluster has reached their limits. However, when a cluster is over-provisioned, it’s best to remove nodes so that you don’t burn through money unnecessarily.

Manual Scaling

Most cloud providers allow you to easily perform these scaling operations with a single command.

Here is an example of how you scale your Azure Kubernetes Service cluster: (docs)

If you run a bare-metal cluster, it is your responsibility to provision, manage and add nodes to the cluster.

Scaling Applications

Now that we know how to scale our cluster to provide the resources that we need, we can start scaling our applications.

In order to do this, you need to think through and design the application with scaling in mind.

Application Composition

The success of your scalabilities lies in your application composition which is crucial for building high-scale systems.

In Kubernetes, your scalability unit is called a pod. It’s capable of running one or more containers next to each other on the same node.

Pods are deployed and managed when you create a Deployment. This specification will define what a pod is and what characteristics it needs and how many running replicas of them are required. Behind the scenes, the deployment will create a Replica Set to manage all the replicas for the deployment.

However, it is not recommended to create and manage your own Replica Sets. Instead, you should delegate this to the Deployment as this has more of a declarative approach.

Example Scenario

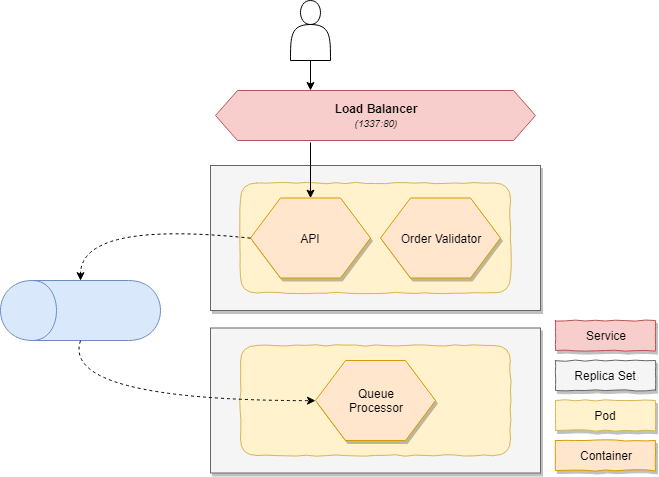

Imagine that we have an order microservices which exposes an HTTP endpoint to provide functionality to our customers. For every new order, we validate and store it. To achieve this, we offload the persistence to a second process which takes our orders of a queue and persist them in an asynchronous manner.

In our scenario we can identify two components:

- An API that is our customer-facing endpoint

- Our order queue worker that is handling and persisting all orders

These two components also have their own scaling requirements — Our API will scale based on CPU and memory, while our order queue worker will scale based on the amount of work that is left in the queue.

This instructs the deployment, which updates the Replica Set, to change the number of requested replicas.

In my reference application, you can find an example of the Order service running on Kubernetes.

It consists of two pods:

- A frontend pod which runs the API container with a validator sidecar

- A backend pod which runs the queue worker

Deploying pods

For the sake of the blog post, we will only deploy the queue processor. If you want to learn more, check out this link at GitHub which explains how you can deploy it yourself by following these instructions.

In order to deploy our processor, we need to describe what it looks like and how it should behave. We want to deploy a pod which is running the tomkerkhove/containo.services.orders.queueprocessor Docker image.

Next, we want it to run three exact replicas of our pod and use rolling updates as our deployment strategy.

It uses the following deployment:

If you want to learn more I recommend reading this blog post by Etienne Tremel.

We can easily deploy our deployment specification by using kubectl:

Once that’s finished you can view the state of your deployment by running:

You can find more information about creating deployments in the Kubernetes documentation.

Scaling pods

Once your deployment has been created, manually scaling the amount of pods instances is simple:

That’s it! The deployment will instruct the replica set to change the desired amount of instances which will request Kubernetes to either provision more instances, or instruct running pods to shut down.

If you get the latest status of your deployment again, you’ll notice that it has changed the desired amount and is gradually adding new pods:

Conclusion

We’ve learned that Kubernetes requires you to scale your cluster to provide enough resources for your application. Applications also need to be designed to be scalable and we’ve used an example scenario which shows you a potential approach to design, deploy and scale it.

While we are now able to manually scale our cluster and applications, you don’t want to get into a situation where you have to monitor them night and day and having to wake up in the middle of the night to scale them.

In our next post, we will have a look how we can automatically scale our Kubernetes cluster to ensure that it always provides enough resources for our applications.

Thanks for reading,

Tom.

Subscribe to our RSS feed