Azure Event Hubs for Apache Kafka is now generally available. Users of the streaming platforms Event Hubs and Apache Kafka will now get the best of both worlds – the ecosystem and tools of Kafka, along with Azure’s security and global scale. Furthermore, by bringing two powerful distributed streaming platforms together, users can have access to the breadth of Kafka ecosystem applications without having to manage servers or networks.

Apache Kafka

For those unfamiliar with it, Kafka is a distributed streaming platform designed for handling real-time data feeds. It is open-source stream-processing software developed under the Apache Software Foundation and written in Scala and Java. It consists of several components:

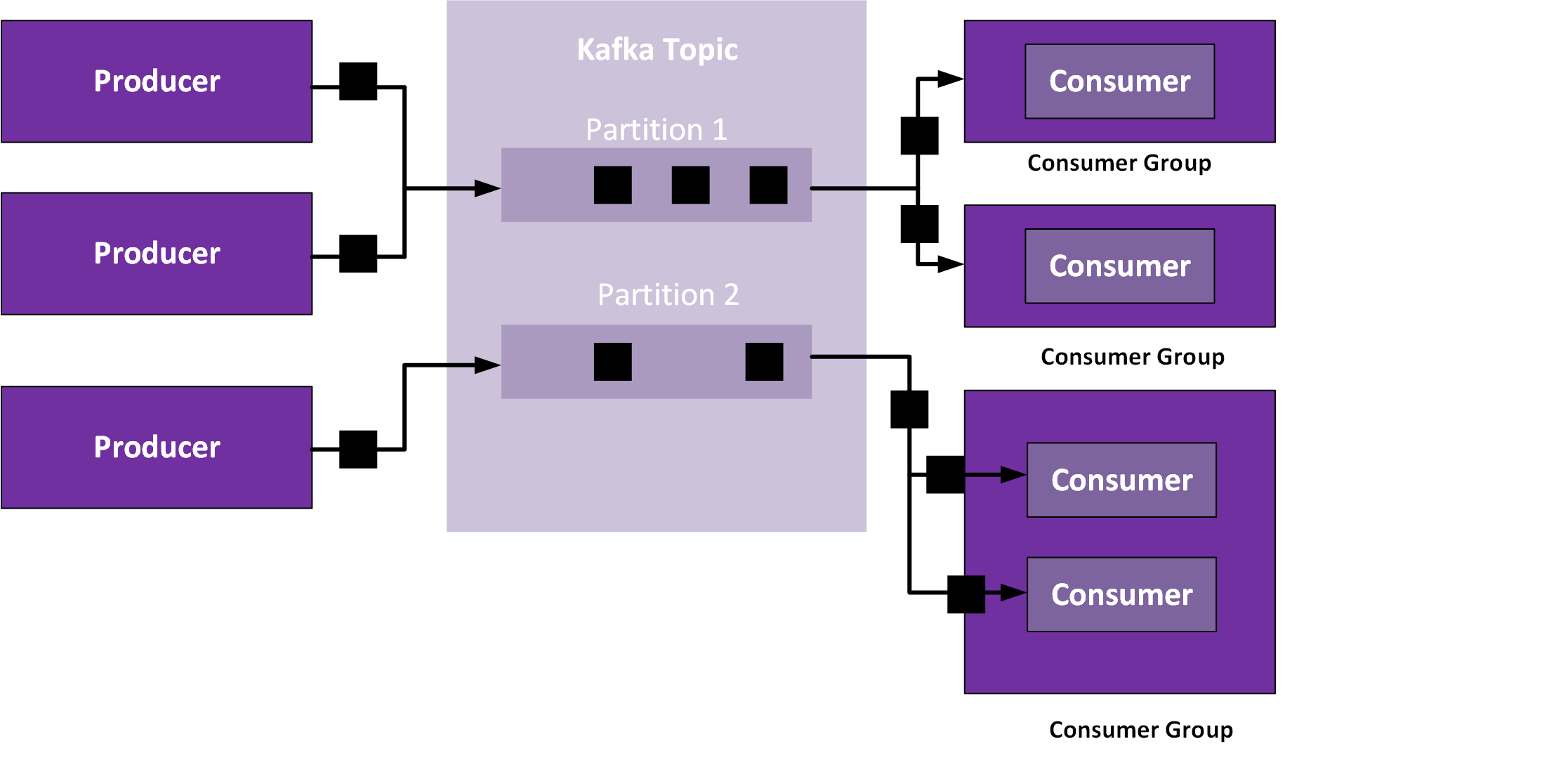

- Topics: a conceptual grouping of related messages.

- Partitions: enable parallelism. You can split a topic into one or more partitions. Each message is then kept in an ordered queue within its partition (note that messages are not ordered across partitions).

- Consumers: zero, one, or more consumers can process any partition of a given topic.

- Consumer Groups: groups of consumers that share the load. Within a consumer group, each partition is read by only one consumer, so each message is processed by a single member of the group. You can see consumer groups as a way to level the load between consumers.

- Replication: you can set the replication factor in Kafka on a per-topic basis, which improves reliability if one or more servers fail.
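The relationship between topics, partitions, and consumer groups can be illustrated with a small in-memory model. This is only a sketch under simplifying assumptions: `pick_partition` and `assign_partitions` are hypothetical helpers, the CRC32 hash stands in for Kafka's real partitioner, and the round-robin assignment is just one possible strategy.

```python
from collections import defaultdict
from zlib import crc32


def pick_partition(key: str, num_partitions: int) -> int:
    """Map a message key to a partition (toy hash, not Kafka's real partitioner)."""
    return crc32(key.encode("utf-8")) % num_partitions


def assign_partitions(num_partitions: int, consumers: list[str]) -> dict[str, list[int]]:
    """Spread the partitions round-robin over the consumers of one consumer group."""
    assignment = {consumer: [] for consumer in consumers}
    for partition in range(num_partitions):
        owner = consumers[partition % len(consumers)]
        assignment[owner].append(partition)
    return assignment


# A topic with 4 partitions; each partition is an ordered, append-only list.
NUM_PARTITIONS = 4
topic = defaultdict(list)
keys = ["device-a", "device-b", "device-a", "device-c", "device-b"]
for sequence, key in enumerate(keys):
    # Messages with the same key land in the same partition, so they stay ordered.
    topic[pick_partition(key, NUM_PARTITIONS)].append((key, sequence))

# Two consumers in one group: every partition has exactly one owner.
group = assign_partitions(NUM_PARTITIONS, ["consumer-1", "consumer-2"])
```

Because each partition has a single owner within the group, adding consumers (up to the number of partitions) spreads the load without two members of the group processing the same message.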

Azure Event Hubs

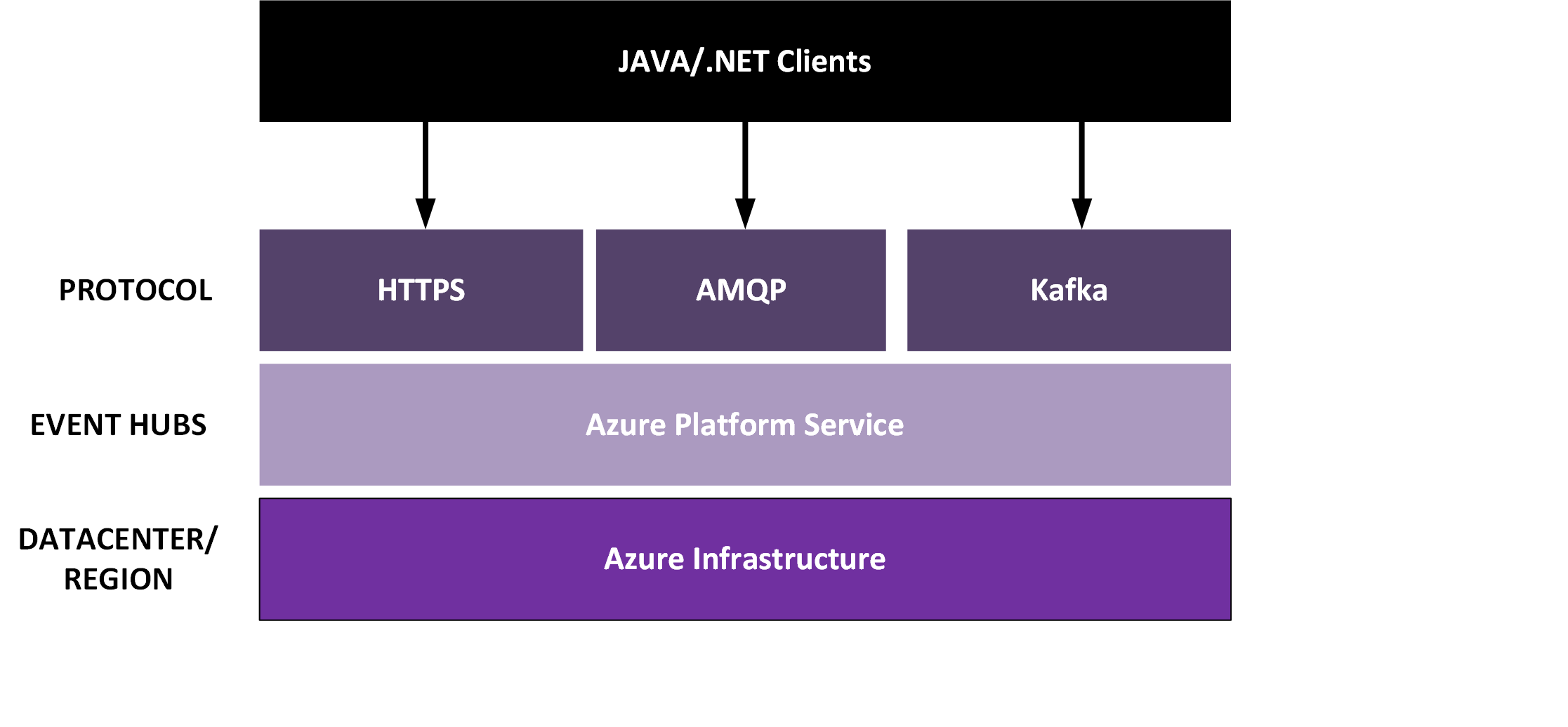

Azure Event Hubs is an event ingestion service in Microsoft Azure that provides a highly scalable data streaming platform. Microsoft manages the service, so its users do not have to manage any infrastructure. Furthermore, you only pay for what you use, based on:

- Ingestion per million events

- Throughput Units (1 MB/s ingress, 2 MB/s egress)

- Retention

- Enabling capture feature

- Brokered connections

- Additional brokered connections

- Consumer Groups

- Message Size (256 KB or 1 MB)

- Leveraging the Kafka endpoint

You can find more details on pricing for Event Hubs on the pricing page.
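As a rough illustration of how the throughput unit figures above translate into capacity planning, the following sketch estimates how many units a workload would need. It assumes only the two limits quoted in the list (1 MB/s ingress and 2 MB/s egress per unit); `required_throughput_units` is a hypothetical helper, and any other service limits are deliberately not modeled.

```python
import math

# Per-throughput-unit limits as quoted in the list above.
INGRESS_MB_PER_TU = 1.0
EGRESS_MB_PER_TU = 2.0


def required_throughput_units(ingress_mb_s: float, egress_mb_s: float) -> int:
    """Return the smallest number of units covering both ingress and egress."""
    needed_for_ingress = math.ceil(ingress_mb_s / INGRESS_MB_PER_TU)
    needed_for_egress = math.ceil(egress_mb_s / EGRESS_MB_PER_TU)
    # At least one unit is always needed, even for an idle namespace.
    return max(1, needed_for_ingress, needed_for_egress)


# 3.5 MB/s in needs 4 units; 5 MB/s out needs 3 units; the larger value wins.
units = required_throughput_units(ingress_mb_s=3.5, egress_mb_s=5.0)
```

In this example ingress is the bottleneck, so the estimate is 4 units.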

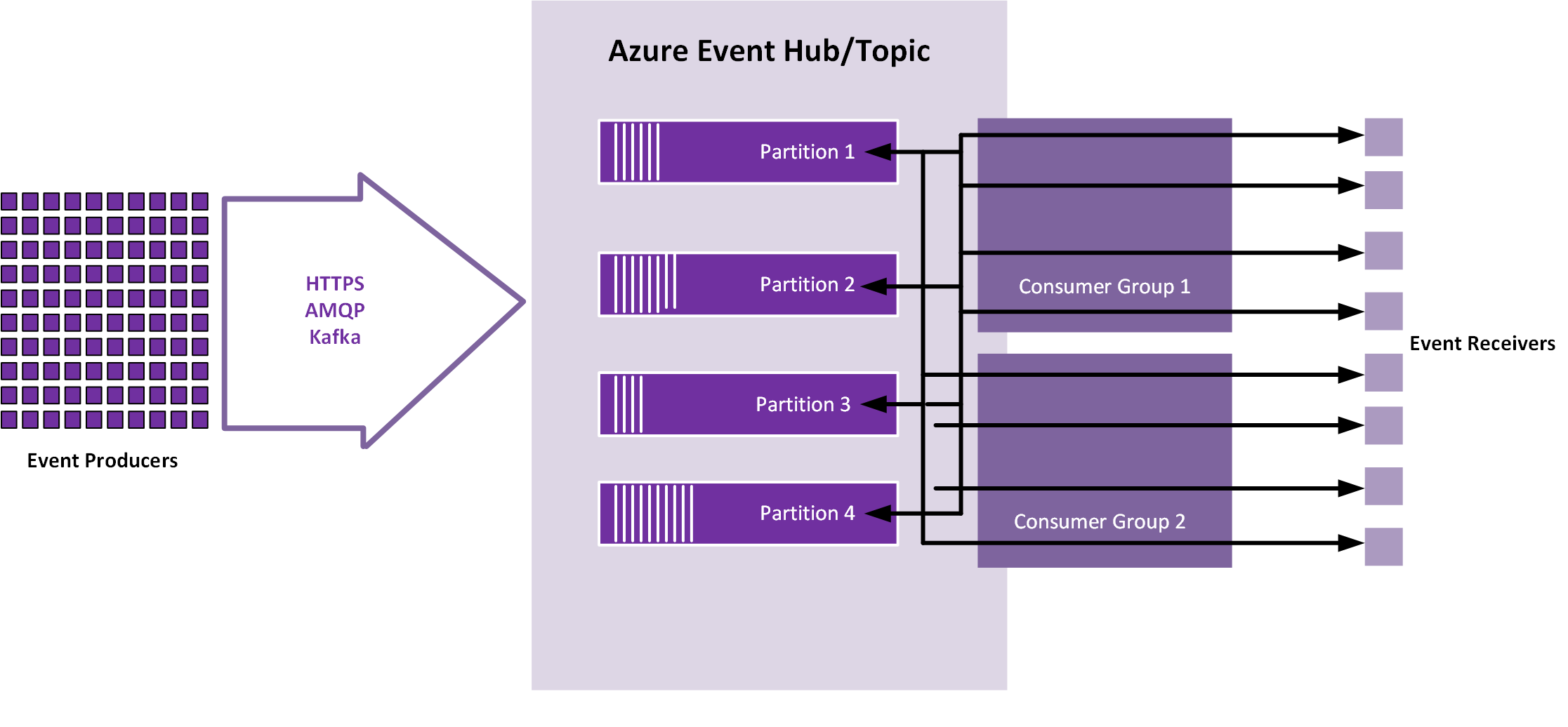

Azure Event Hubs has the following components:

- Event producers: Any entity that sends data to an event hub. Event publishers can publish events using HTTPS, AMQP 1.0, or Apache Kafka (1.0 and above).

- Partitions: Each consumer only reads a specific subset, or partition, of the message stream.

- Consumer groups: A view (state, position, or offset) of an entire event hub. Consumer groups enable multiple consuming applications to each have a separate view of the event stream, and to read the stream independently at their own pace and with their own offsets.

- Throughput units: Pre-purchased units of capacity that control the throughput capacity of Event Hubs.

- Event receivers: Any entity that reads event data from an event hub. All Event Hubs consumers connect via the AMQP 1.0 session, and events are delivered through the session as they become available. All Kafka consumers connect via the Kafka protocol 1.0 and later.
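The idea that each consumer group is a separate view of the stream with its own offset can be sketched with a toy in-memory partition. This is an illustration only: `PartitionLog` is a hypothetical class, and it keeps offsets inside the log for brevity rather than reflecting how Event Hubs or its clients actually track positions.

```python
class PartitionLog:
    """Toy partition: one ordered event log plus one read offset per consumer group."""

    def __init__(self) -> None:
        self.events: list[str] = []
        self.offsets: dict[str, int] = {}

    def send(self, event: str) -> None:
        """Append an event to the end of the log (the producer side)."""
        self.events.append(event)

    def receive(self, consumer_group: str, max_events: int = 1) -> list[str]:
        """Read from this group's own position and advance only that position."""
        start = self.offsets.get(consumer_group, 0)
        batch = self.events[start:start + max_events]
        self.offsets[consumer_group] = start + len(batch)
        return batch


log = PartitionLog()
for event in ["e1", "e2", "e3"]:
    log.send(event)

# Two consuming applications read the same events at their own pace.
dashboard = log.receive("dashboard", max_events=3)
archiver = log.receive("archiver", max_events=1)
```

Here the `dashboard` group has already read all three events while the `archiver` group has read only the first; neither affects the other's position.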

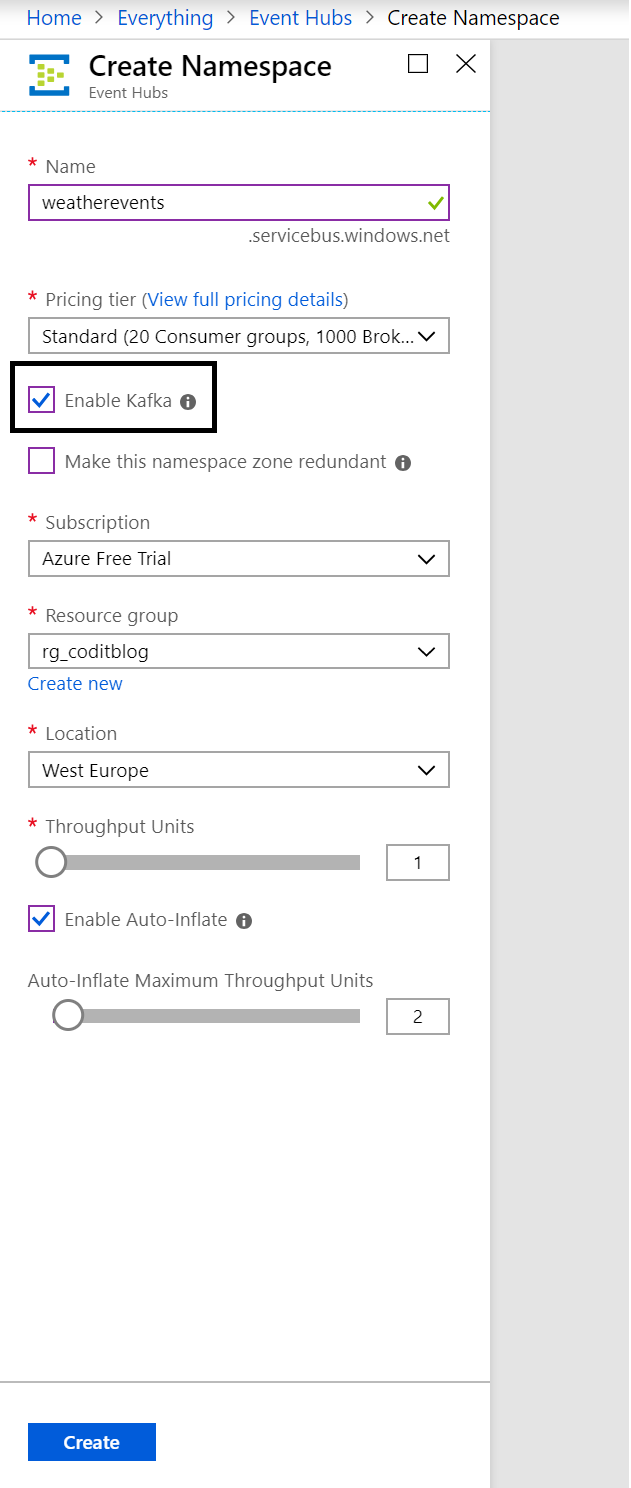

Enable Kafka for Event Hubs

Earlier this year Microsoft released a preview of the integration of Kafka with Azure Event Hubs by providing a Kafka endpoint, which lets users stream their event data into Event Hubs. With the GA of Azure Event Hubs for Apache Kafka, users can start streaming events from applications that use the Kafka protocol directly into Event Hubs by merely changing a connection string.

Kafka is available in the standard and dedicated tiers of Azure Event Hubs. When you provision an instance (namespace) of Event Hubs, you can enable the Kafka capability. By enabling Kafka, you allow your Event Hubs namespace to understand Apache Kafka's message protocol and APIs natively.

Once your Event Hubs instance is available, you can connect to an event hub using the Kafka messaging protocol, which is a binary protocol over TCP. For more details, see the Kafka protocol guide. A Kafka client can communicate with an event hub by merely changing its configuration to point to the Kafka endpoint. In the configuration, you set the bootstrap.servers parameter to the Kafka endpoint, which is the fully qualified name of the Event Hubs namespace on port 9093. You also need to set the security mechanism to PLAIN and, for a Java client, pass the Event Hubs connection string as the password. Below you see the configuration:

bootstrap.servers={YOUR.EVENTHUBS.FQDN}:9093

security.protocol=SASL_SSL

sasl.mechanism=PLAIN

sasl.jaas.config=org.apache.kafka.common.security.plain.PlainLoginModule required username="$ConnectionString" password="{YOUR.EVENTHUBS.CONNECTION.STRING}";

The connection string looks like:

Endpoint=sb://myeventhub.servicebus.windows.net/;SharedAccessKeyName=RootManageSharedAccessKey;SharedAccessKey=OR1FRg2VK3hPoDAEigpqcWDKPqTHcvFlyxHdtF3RQks=
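To show how the client settings follow from the connection string, here is a minimal sketch that derives them in code. The helper names `parse_connection_string` and `kafka_client_properties` are hypothetical, the key in the sample string is a placeholder, and the result is simply a dictionary mirroring the Java properties listed earlier, not a call into any Kafka library.

```python
from urllib.parse import urlparse


def parse_connection_string(connection_string: str) -> dict[str, str]:
    """Split 'Key=Value;Key=Value' pairs; values may themselves contain '='."""
    parts = {}
    for segment in connection_string.strip().split(";"):
        if segment:
            key, _, value = segment.partition("=")  # split on the first '=' only
            parts[key] = value
    return parts


def kafka_client_properties(connection_string: str) -> dict[str, str]:
    """Build the Kafka client settings from an Event Hubs connection string."""
    endpoint = parse_connection_string(connection_string)["Endpoint"]
    # sb://myeventhub.servicebus.windows.net/ -> myeventhub.servicebus.windows.net
    host = urlparse(endpoint).hostname
    jaas = (
        "org.apache.kafka.common.security.plain.PlainLoginModule required "
        f'username="$ConnectionString" password="{connection_string}";'
    )
    return {
        "bootstrap.servers": f"{host}:9093",
        "security.protocol": "SASL_SSL",
        "sasl.mechanism": "PLAIN",
        "sasl.jaas.config": jaas,
    }


# Placeholder key for illustration only; use your own connection string.
sample = (
    "Endpoint=sb://myeventhub.servicebus.windows.net/;"
    "SharedAccessKeyName=RootManageSharedAccessKey;"
    "SharedAccessKey=abc123="
)
props = kafka_client_properties(sample)
properties_text = "\n".join(f"{name}={value}" for name, value in props.items())
```

The resulting `properties_text` has the same four lines as the configuration above and could be written to a properties file for a Java client. Treat the connection string as a secret, since it contains the shared access key.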

You can also try Kafka for Azure Event Hubs using a .NET client (producer and consumer). See the Accessing Event Hubs with Confluent Kafka Library blog post from Wagner Silveira and the Middleware Friday episode Azure Event Hubs for Kafka Ecosystems.

The Mimicking strategy

The differences between Azure Event Hubs and Apache Kafka are minimal, and conceptually both have similar architectures. Enabling the Kafka protocol for Event Hubs therefore allows users to leverage the same data streaming technology in the cloud, managed by the cloud provider. Because Event Hubs mimics Kafka by offering a Kafka endpoint, Kafka applications can use a streaming platform in the cloud without any cluster management.

Microsoft is applying the same strategy to its Cosmos DB service by offering MongoDB and Cassandra APIs, thus lowering the barrier for open-source applications to use a cloud database service. Again, users do not have to manage anything.

Cheers,

Steef-Jan
