Codit is back in London for Integrate 2018! We are very proud to once again have the biggest delegation at Integrate, representing the biggest Microsoft integration company! This blog post was put together by each and every one of our colleagues attending Integrate 2018.

Keynote – The Microsoft Integration Platform – Jon Fancey

Jon Fancey, Principal Program Manager at Microsoft, opened the conference with the keynote session focusing on change and disruption. He took us on a journey through time, explaining the different phases of technology change he experienced and how important it is to maintain a critical view on technologies that are adopted within organizations. It’s important to keep questioning and assessing your methods and to adapt to new technology.

“If you don’t disrupt, someone else will disrupt you. The other guy doesn’t care if you like change or not.”

Jon called Matthew Fortunka to the stage. Matthew is the Head of Development for Car Buying at Confused.com. He presented a real-world example of how Confused.com leveraged Microsoft’s Azure cloud to transform and optimize their business model, and to disrupt the way people buy cars and car insurance.

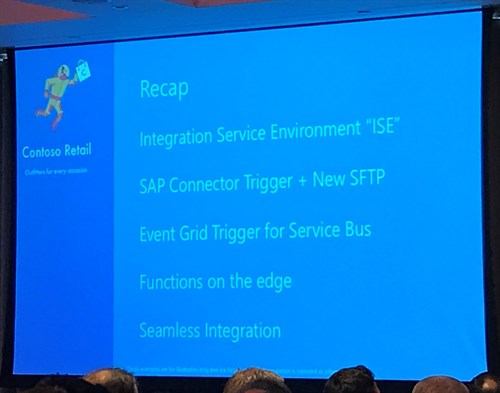

Next up – demo time for the Microsoft Team. In an extensive 4-part demo we were shown an end-to-end example of a backorder being processed for their imaginary Contoso Retail shop. By chaining most of the Microsoft Integration building blocks together they revealed some interesting new features to come:

- Integration Service Environment: VNET integration for Logic Apps! This allows Logic Apps to connect to resources hosted on Azure VMs without the use of the on-premises data gateway.

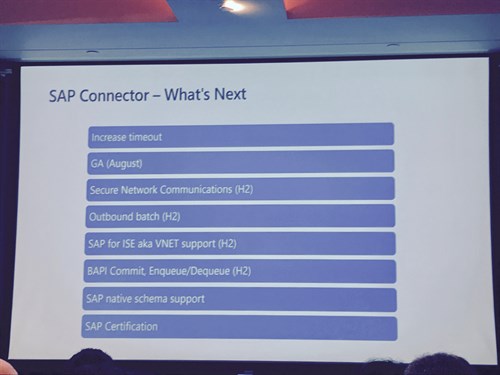

- SAP Trigger for Logic Apps: Allowing SAP messages to trigger a Logic App. The team confirmed this is an event-based trigger with no polling used. Together with the existing SAP connector, the SAP Trigger enables bi-directional communication between SAP and Logic Apps. It is available in private preview as of today.

Introduction to Logic Apps – Kevin Lam & Derek Li

The first ‘real’ session of the day was renamed to ‘Be an Integration Hero with Logic Apps’ by Kevin Lam and Derek Li of Microsoft.

Starting with an introduction to Logic Apps, they explained that some 200 connectors are available and reviewed the basic building blocks used to create a Logic App, such as Triggers & Actions. A demo followed in which they showed how easy it is to build a Logic App, starting off with ‘Hello World’ and moving on to showing a weather forecast within a few clicks.

The manageability of Logic Apps was then discussed, covering Visual Studio, B2B and security, followed by a brief explanation of rule-based alerts.

In a second, more complex scenario they demonstrated a Logic App that picked up a .jpg of an invoice from blob storage, ran OCR on it, called a Function to process the text, and sent a mail when the total amount was over $10.

Finally, new features for Logic Apps were detailed: China Cloud, mocking data for testing, OAuth request triggers, Managed Service Identity support, Key Vault support and output property obfuscation.

An interesting presentation with a focus on how Logic Apps simplify the development process and how quickly Logic Apps can be implemented.

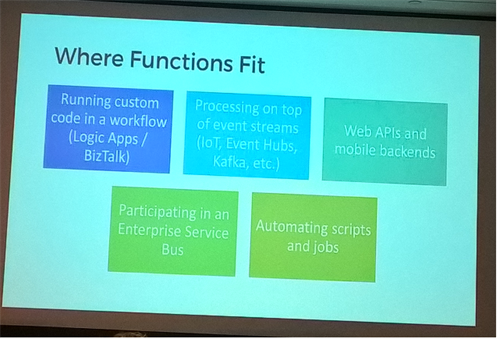

Azure Functions role in integration workloads – Jeff Hollan

In the third session of the day, Jeff Hollan took us into the realm of using Azure Functions in integration workloads.

Jeff started off by explaining some key concepts in Azure Functions (Function App, Trigger, Bindings), and gave us an overview of where you can develop Azure Functions: everywhere! (Portal, Visual Studio, VS Code, IntelliJ, Notepad…). He gave a small demo on how to quickly create an Azure Function in Visual Studio. These applications can be written anywhere, and they can also run anywhere.

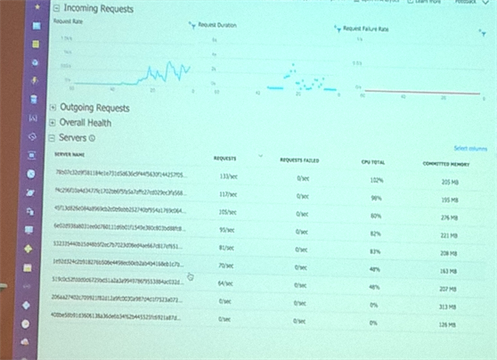

The advantage of Azure is of course that instances auto-scale as needed, as was demonstrated by throwing 30,000 requests (1,000/sec for 30 seconds) at the Azure Function written in the earlier demo.

Next, Jeff gave some tips and best practices for Azure Functions, covering instance and resource management, how to properly instantiate some variables (e.g. SqlConnection), and how to fine-tune the host.json file to control how many function executions run on every instance.

To close, we were given some insights into Durable Functions. By implementing the Durable Task Framework, Functions now support long-running processes. This makes it a great new feature, which can replace Logic Apps in some scenarios and work alongside Logic Apps in others!

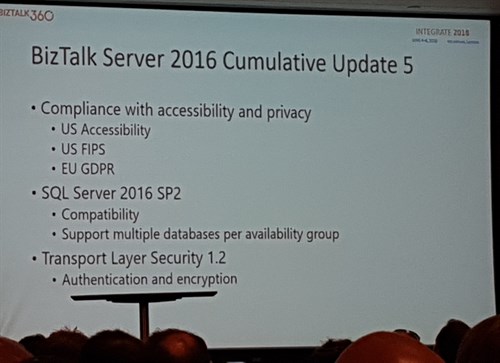

Hybrid integration with Legacy Systems – Paul Larsen & Valerie Robb

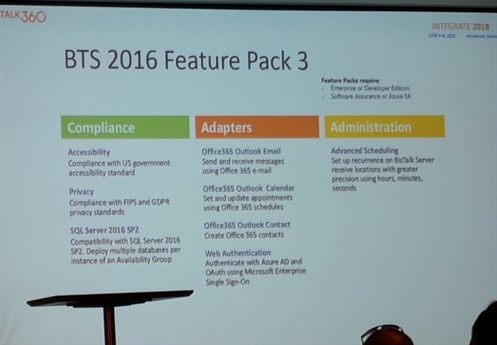

In this fourth session of the day, Paul Larsen & Valerie Robb took us on a journey through the upcoming features of BizTalk 2016.

It was announced that Cumulative Update 5 will be compliant with both the US government accessibility standard and GDPR.

It will also be compatible with SQL Server 2016 SP2.

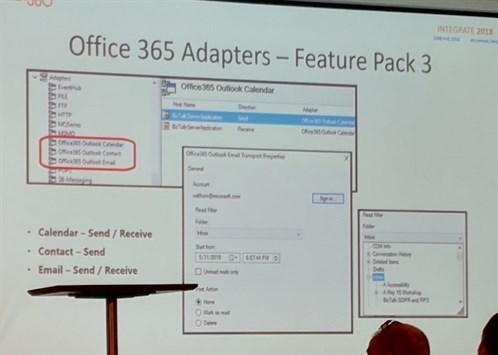

Feature Pack 3 will have support for advanced scheduling and Office 365 adapters:

- Office 365 Outlook Email send and receive

- Office 365 Outlook Calendar

- Office 365 Outlook Contacts

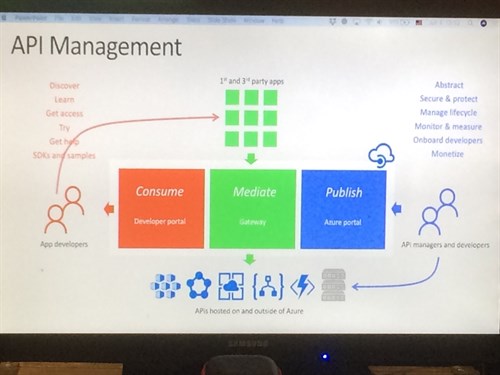

API Management overview – Miao Jiang

In this session, Miao Jiang discussed the rise of APIs and the wide variety of use cases for APIs. Popular customer use cases he cited include enterprise API catalog, customer and partner integration, mobile enablement and IoT.

API Management, Miao advised, can be used to decouple consumers from backend APIs, to manage the API lifecycle, and to monitor, measure and monetize APIs. Consumers meanwhile can use the developer portal to discover, learn and try out APIs.

Besides this, he noted that VNETs or ExpressRoute deliver connectivity to on-premises APIs or APIs in another cloud environment, while policy documents control the behavior of groups of APIs through capabilities such as rate limiting, caching, transformation and many more.

In his demo we saw the usage of policy expressions and named values.

Announcements that were made include Application Insights integration, versions and revisions, capacity metrics, auto scale and Azure Key Vault integration. A very nice overview of API Management. Looking forward to the in-depth sessions that will follow!

Eventing, Serverless and the Extensible Enterprise – Clemens Vasters

Clemens Vasters, the lead architect at the Azure Messaging team, entertained us with an enlightening architectural talk about “Event Driven Applications.” In his talk he stressed that services should be autonomous entities with clear ownership. He focused on choosing the right service communication protocol for the job. He identified two types of data exchanges:

- Messaging: includes an intent/expectation by the message sender (e.g., commands, transfers). Azure Service Bus is well-suited for this type of data exchange.

- Eventing: is mostly about reporting facts, telling what just happened.

  - Discrete events are independent and immediately actionable (e.g., fire alarm). Azure Event Grid is a good match for this type of event.

  - Event series should be analyzed first, before you can react to them (e.g., temperature threshold). This analysis could be done through Azure Stream Analytics wired up to Event Hubs, which allows stateful and partitioned data consumption. Event Hubs now supports the Apache Kafka protocol.
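
The difference between discrete events and event series can be sketched in a few lines of Python. This is our own illustration rather than anything shown in the talk: the event shape, the `on_discrete_event` function, the `TemperatureMonitor` class and the sliding-window average are all assumptions, with plain in-memory code standing in for Event Grid and Stream Analytics.

```python
from collections import deque


def on_discrete_event(event):
    """A discrete event is actionable by itself: react immediately."""
    if event["type"] == "fire_alarm":
        return f"evacuate {event['building']}"
    return None


class TemperatureMonitor:
    """Event series: keep a sliding window and react to the aggregate only."""

    def __init__(self, window_size, threshold):
        self.readings = deque(maxlen=window_size)
        self.threshold = threshold

    def on_reading(self, celsius):
        """Record a reading; return True when the windowed average is too high."""
        self.readings.append(celsius)
        window_full = len(self.readings) == self.readings.maxlen
        average = sum(self.readings) / len(self.readings)
        # A single spike is not enough: the window must be full and its average too high.
        return window_full and average > self.threshold


if __name__ == "__main__":
    print(on_discrete_event({"type": "fire_alarm", "building": "HQ"}))
    monitor = TemperatureMonitor(window_size=3, threshold=30.0)
    print([monitor.on_reading(t) for t in [20.0, 45.0, 22.0, 35.0, 36.0]])
```

The fire alarm triggers an action on its own, while the 45-degree spike is ignored until the average over three readings crosses the threshold.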

Next, Clemens explained the efforts to standardize how cloud events should be handled, through the CNCF CloudEvents specification. Many vendors have committed to this new standard, so fingers crossed for wide adoption. Event Grid recently added native support for CNCF CloudEvents.
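
To give an idea of what such a standardized event looks like, here is a minimal Python sketch of a CloudEvents-style envelope. The attribute names follow version 1.0 of the specification, which was published after this talk, so the draft discussed at the time may have differed. The `make_cloud_event` and `is_valid` helpers and the example type and source values are hypothetical.

```python
import json
import uuid
from datetime import datetime, timezone

# Context attributes that CloudEvents 1.0 marks as required.
REQUIRED_ATTRIBUTES = ("specversion", "id", "source", "type")


def make_cloud_event(event_type, source, data):
    """Wrap a payload in a CloudEvents-style envelope (structured JSON form)."""
    return {
        "specversion": "1.0",
        "id": str(uuid.uuid4()),
        "source": source,
        "type": event_type,
        "time": datetime.now(timezone.utc).isoformat(),
        "datacontenttype": "application/json",
        "data": data,
    }


def is_valid(event):
    """Check that every required attribute is present as a non-empty string."""
    return all(
        isinstance(event.get(name), str) and event[name] != ""
        for name in REQUIRED_ATTRIBUTES
    )


if __name__ == "__main__":
    event = make_cloud_event(
        "com.contoso.order.backordered",  # hypothetical event type
        "/contoso/retail/orders",         # hypothetical source
        {"orderId": 42},
    )
    print(json.dumps(event, indent=2))
    print(is_valid(event))
```

Because the envelope is the same regardless of publisher, a consumer can route on `type` and `source` without knowing anything about the payload.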

The Reactive Cloud: Azure Event Grid (Eventing and streaming with Azure) – Dan Rosanova

The amount of data that is being processed in the cloud is increasing every day, and in his talk Dan focused on the application of Azure Event Grid and Azure Event Hubs to deal with this growth. He presented both technologies as great solutions; which one to use depends on context.

After introducing Azure Event Grid, a platform for ingesting data from many providers, he mentioned that the product has been extended with features like hybrid endpoints, a dead letter endpoint, and an option to limit the number of delivery retries.
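
The combination of a retry limit and a dead letter endpoint can be sketched as follows. This is a simplified Python illustration of the general pattern, not Event Grid's actual delivery logic: the `deliver` and `make_flaky_handler` names are hypothetical, and the waiting time between attempts that a real service applies is left out.

```python
def deliver(event, handler, max_attempts, dead_letters):
    """Try to deliver an event; after max_attempts failures, dead-letter it.

    Returns the number of attempts that were made.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            handler(event)
            return attempt  # delivered, no further retries needed
        except Exception as error:
            last_error = error
    # Retries exhausted: park the event instead of dropping it silently.
    dead_letters.append(
        {"event": event, "reason": str(last_error), "attempts": max_attempts}
    )
    return max_attempts


def make_flaky_handler(failures_before_success):
    """Hypothetical endpoint that fails a fixed number of times, then succeeds."""
    calls = {"count": 0}

    def handler(event):
        calls["count"] += 1
        if calls["count"] <= failures_before_success:
            raise ConnectionError("endpoint unavailable")

    return handler


if __name__ == "__main__":
    dead_letters = []
    print(deliver({"id": "a"}, make_flaky_handler(2), 3, dead_letters))
    print(deliver({"id": "b"}, make_flaky_handler(5), 3, dead_letters))
    print(dead_letters)
```

The first event gets through on its third attempt; the second one exhausts its retries and ends up in the dead letter list, where it can be inspected or replayed later.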

The second part of his talk focused on Azure Event Hubs, including the possibility to use Azure Time Series Insights: a service for viewing, monitoring and searching in streams. A new addition is the ability to consume data from Kafka environments.

Dan concluded his talk by stating that Azure Event Grid should be used for the fan-in of data, whereas Azure Event Hubs is meant for fan-out purposes.

Enterprise Integration using Logic Apps – Divya Swarnkar / Jon Fancey

Jon Fancey and Divya Swarnkar took the stage with a session on Enterprise Integration with Logic Apps and walked us through the improvements made in the past year – and what’s coming up next.

Following the earlier announcement of the SAP trigger for Logic Apps being in private preview, a short demo showed this new trigger in action. The trigger contains an optional field where the message type can be specified (via the namespace we know from integrating SAP with BizTalk) to make the trigger listen for a certain type. When left empty, the trigger will fire for every message sent to a specific SAP ProgramId.

The Logic Apps team will also supply us with an action capable of generating the SAP schemas, and is working on a way to allow those schemas to be stored directly in the Integration Account.

Jon walked us through the improvements made to mapping: from Liquid templates, which were announced last year, through custom assemblies, to XSLT 3.0 support.

A demo of the OMS template for Logic Apps management showed us the bulk resubmit feature and tracked properties (that can now be configured in the designer instead of in code view only). These functions have been available for some time, but Divya did mention that they are working on a Bulk Download feature and a way to identify runs that have been resubmitted.

They concluded the session with an overview of what’s coming next for Enterprise Integration. In particular, they announced that the Integration Account will support a consumption-based pricing model in the future. This was met with a round of applause!

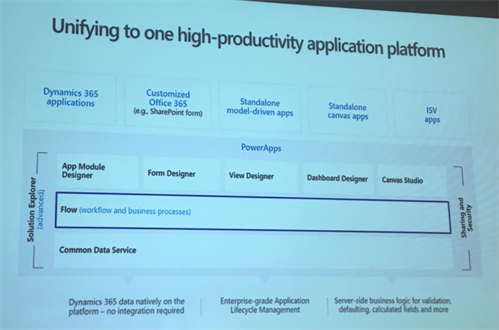

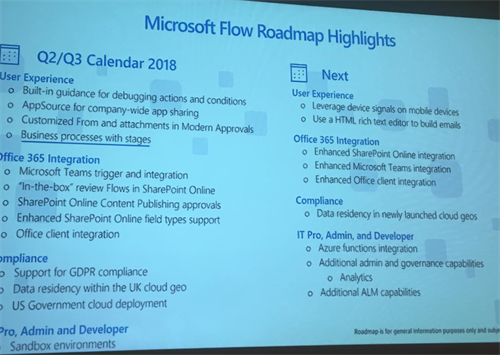

Microsoft Flow in the Enterprise – Kent Weare

In the last session of day 1, Kent Weare, Principal Program Manager for Microsoft Flow, presented Flow. Microsoft Flow is an offering in the Microsoft Cloud and sits on top of Logic Apps. The difference with Logic Apps is that you have less control over the flow definition; for instance, you cannot access a code-behind page. Office 365 users have access to Flow, so definitely explore it yourself!

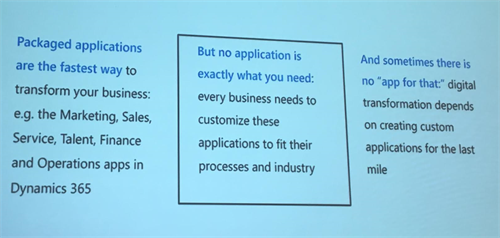

Flow is part of what Microsoft calls the Business Application Platform (BAP). At the center of BAP you will find PowerApps and Power BI, targeted at power users. Flow and the Common Data Service support these applications by moving data in and out or by putting a business process in place. On top of Flow, you can build applications for Dynamics and SharePoint (Flow is a replacement of workflows in SharePoint), or build a standalone application.

With Flow, application creation is democratized for end users, filling the gap they experience between packaged applications and the features missing from those applications. The democratization also aims to reduce IT involvement for applications that have little or no impact on critical IT processes.

In Kent’s session, he showed how to point-and-click a business process in a designer and run it. In total he showed four demos for various scenarios (Flow is mostly THE automation tool around Office 365 applications):

- Change and Incident Management using Teams and Flow Bot

- Flow integration with Excel

- Intelligent Customer Service

- Hot dog or not hot dog

After the demos, Kent wrapped up his session by sharing the future roadmap of Flow.

Thank you for reading our blog post. Feel free to comment or give us feedback in person.

This blog post was prepared by:

Bart Cocquyt

Charles Storm

Danny Buysse

Jasper Defesche

Jef Cools

Jonathan Gurevich

Keith Grima

Matthijs den Haan

Michel Pauwels

Niels van der Kaap

Nils Gruson

Peter Brouwer

Ronald Lokers

Steef-Jan Wiggers

Tim Lewis

Toon Vanhoutte

Wouter Seye
