Architecting Highly Available Cloud Integrations – Richard Seroter

Richard starts out by stating that creating a cloud integration is similar to sticking pieces together on a model plane; the strength and flexibility of the glue determine the resilience of the model.

In a (cloud) integration system this glue would be the messaging and eventing infrastructure connecting the other system parts and allowing for recovery and retry.

Alongside recovery, the ability to scale out and introduce redundancy also defines the resilience of the system. Many moving parts in Azure scale out by design, but it is still essential to architect your applications to take as much advantage of this as needed and to throttle some users when required. Even if your integration solution can scale out to the max and is as flexible as rubber, you may still need to introduce load leveling so that back-end systems do not get flooded when a tremendous number of messages comes in.
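To make load leveling a bit more concrete, here is a minimal in-process sketch in Python. It is not taken from Richard's session: the function name `run_load_leveled` and its parameters are our own, and in a real Azure solution the buffer would typically be a durable queue rather than an in-memory one. A bounded queue absorbs the burst while a single consumer drains it at a capped rate.

```python
import queue
import threading
import time


def run_load_leveled(messages, handle, max_per_second=5.0, capacity=100):
    """Buffer a burst of messages and hand them to `handle` at a bounded rate.

    Hypothetical helper for illustration only.
    """
    buffer = queue.Queue(maxsize=capacity)  # put() blocks when full (back-pressure)
    done = object()                         # sentinel that tells the consumer to stop
    interval = 1.0 / max_per_second         # minimum pause between back-end calls

    def consumer():
        while True:
            item = buffer.get()
            if item is done:
                break
            handle(item)          # call the (slow) back-end system
            time.sleep(interval)  # cap the rate the back end sees

    worker = threading.Thread(target=consumer)
    worker.start()
    for message in messages:      # the producer may arrive in bursts
        buffer.put(message)
    buffer.put(done)
    worker.join()
```

The producer only slows down when the buffer is full, so short spikes are absorbed without the back end ever seeing more than `max_per_second` calls.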

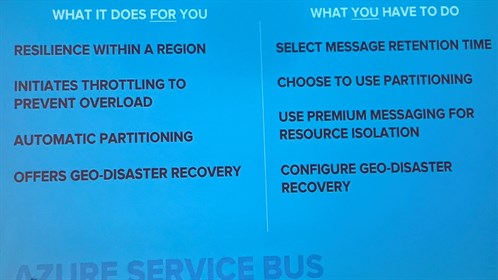

Zooming in on several services, like SQL Server, Cosmos DB, Service Bus, Event Hubs, Logic Apps, and Functions, Richard describes which scaling and availability capabilities are native to the platform and which options are still left for you to provide.

In general, one could say that all the capabilities for scaling and resilience are there, but it is up to you to take advantage of them by making choices in configuration and architecture.

He also points out that in many cases control is not yours: Microsoft determines if, when and where a condition is classified as a disaster, and you should be prepared to react when this happens.

All in all, it comes down to knowing which components in your architecture have which capabilities regarding availability and scaling, and deciding if and how you will make use of these capabilities.

The primary takeaway from this session, however, is still the golden rule: design for disaster by being able to rebuild your environment quickly in a different region, eliminating manual deployment and provisioning steps. In Azure that would mean using Azure Resource Manager (ARM) to the max.

Richard ended his talk with some golden tips:

- Always integrate with highly available endpoints

- Clearly understand which services fail over together

- Regularly perform chaos testing

Real-world Integration Scenarios for Dynamics 365 – Michael Stephenson

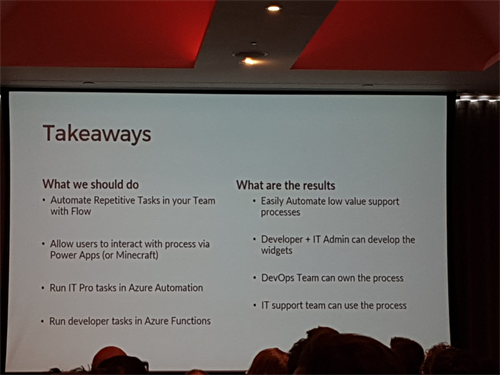

Michael Stephenson delivered the second session on the last day, explaining how Microsoft Flow can simplify DevOps tasks and how these flows can be extended to any user within the team. After giving a brief background about himself, he moved on to explain the “DevOps Wall” and daily tasks related to the role.

He based his example on how “Azure AD B2B Setup” can be used for Multi-Region Group solutions. He demonstrated a “major release user creation” scenario, where an Active Directory admin pushes a CSV file to a PowerShell script that adds the users to the AD and emails the invite notifications.

Michael explained how non-technical teams or individuals might be reluctant to take on such tasks given the amount of responsibility required, especially if they do not know exactly what is happening in the background. He went on to demonstrate how Microsoft Flow simplifies the process for non-technical people, acting as a black box by hiding the individual processes and resources used by the Flow. He then displayed the process on screen, broken into tasks, to show how many steps are required to complete the action above.

He then did a demo using Minecraft, simulating a situation where a user was unable to log in due to not having access. He added the user and granted him access from within Minecraft, which then triggered a Microsoft Flow to complete the process and give the user access.

Michael explained how crucial it is to be resourceful and keep things efficient when building tasks, as these ultimately cost the company money. As a key point, Michael insisted on the importance of automating repetitive tasks and on how Flow can help you achieve this efficiently, reducing work overhead and costs as a result.

Using VSTS to deploy to a BizTalk Server, what you need to know – Johan Hedberg

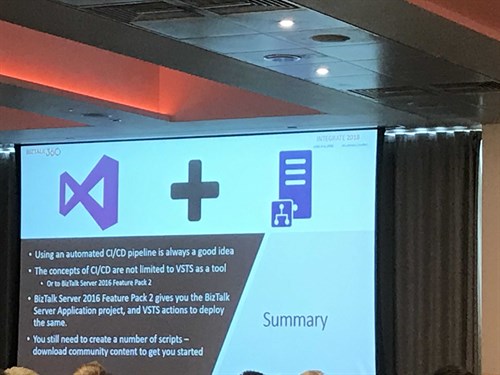

Johan Hedberg showcased the ALM capabilities that now come with the BizTalk 2016 feature packs.

In a hands-on practical session, he started by creating and configuring a BizTalk application project using the BizTalk Deployment Task in VSTS CI. Later he did all the VSTS plumbing for obtaining a usable build agent, and he showed us how to set up the build and release pipelines, even including unit tests!

He also covered the support for tokenized binding files and for pre- and post-processing steps in VSTS.
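The idea behind tokenized binding files can be sketched in a few lines of Python. This is not the implementation of the BizTalk deployment task: the `$(Name)` token syntax, the function name `replace_tokens` and the sample values are assumptions made purely for illustration. Each placeholder in the binding file is replaced by an environment-specific value, and a missing value fails the release early instead of deploying a half-filled file.

```python
import re

# Assumed token syntax for this sketch: $(TokenName)
TOKEN = re.compile(r"\$\(([A-Za-z_][A-Za-z0-9_.]*)\)")


def replace_tokens(text, values):
    """Replace every $(Name) token in `text` with values[Name].

    Hypothetical helper; raises KeyError when a token has no value.
    """
    missing = set()

    def substitute(match):
        name = match.group(1)
        if name not in values:
            missing.add(name)
            return match.group(0)  # leave the token untouched for the error report
        return values[name]

    result = TOKEN.sub(substitute, text)
    if missing:
        raise KeyError(f"no value for tokens: {sorted(missing)}")
    return result


# Example with made-up values for a test environment
binding = "<Address>$(OrdersUrl)</Address>"
print(replace_tokens(binding, {"OrdersUrl": "http://test.example/orders"}))
```

The same binding file can then travel unchanged through every stage of the release pipeline, with only the value set differing per environment.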

This session was excellent for everyone wishing to get started with CI/CD for BizTalk.

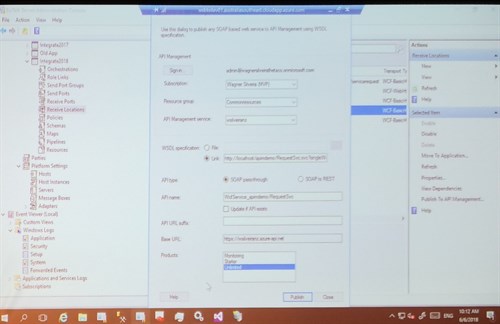

Exposing BizTalk Server to the World – Wagner Silveira

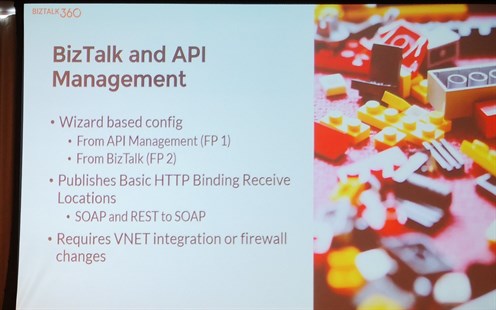

In this session, Wagner Silveira talked about the different ways to expose BizTalk endpoints to the outside world using Azure technologies. Reasons why you would want this include consuming on-premises resources, offering a single gateway and extending cloud workflows.

Message exchange options like queues and topics, file exchange or e-mail are undoubtedly possible, but Wagner focused on exposing HTTP endpoints.

For this, he went over the following options:

- Azure Relay Services

- Logic Apps

- Azure Function Proxies

- Azure API Management

Each of these comes with different security and message format possibilities.

To make an educated decision on which Azure services to use, you should identify your needs and know about the possibilities and limitations of each option.

Then Wagner demoed exposing endpoints using Relay Services and API Management.

Anatomy of an Enterprise Integration Architecture – Dan Toomey

Dan Toomey gave a more architecture-oriented presentation for his session at Integrate 2018.

Typical businesses don’t have one monolithic application, but multiple, and sometimes even hundreds of applications to run. Although this decreases the cost of change, it increases the cost of integration. It’s a challenge to find the sweet spot.

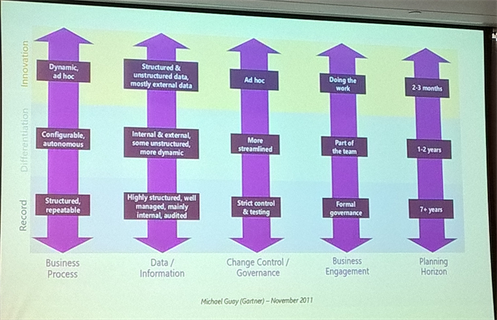

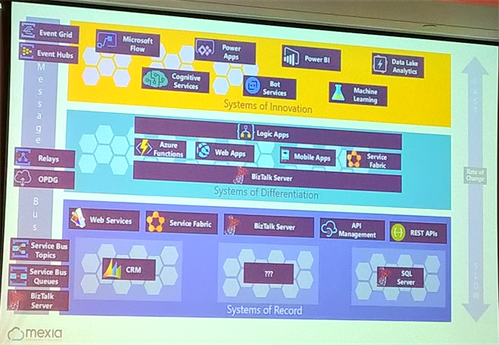

These different applications can be split up into layers. At the bottom is the Systems of Record layer (CRM, SQL, …) for transaction processing and master data; this is the layer that changes least often. One level up is the Systems of Differentiation layer, where processes unique to the business can be found. Topping it all off are the Systems of Innovation, where the fast-changing new applications and requirements live. These layers have different properties concerning Business Processes, Data/Information, Change Control/Governance, Business Engagement, and Planning timelines.

From an integration perspective, the differences can be found in the Rate of Change, API Design, Change Control and Testing Regime. The different layers have different options for integrating them; you’ll mostly use BizTalk and API Management in the Systems of Record layer, BizTalk and Logic Apps for the Differentiation layer, and Flow/PowerApps/Power BI for the Innovation layer. These all have different characteristics which should be considered.

Dan ended the session with some considerations you should keep in mind to integrate better. Consider how your applications will be integrated, make sure your Systems of Record layer is stable, limit customization in Systems of Record, consider using canonical data models, loosely couple inter-layer communications, and allow room for innovation.

Unlock the power of hybrid integration with BizTalk Server and webhooks! – Toon Vanhoutte

Webhooks are a relatively new messaging pattern that may replace synchronous request-response and asynchronous polling techniques.

Using webhooks is not only faster, but it also allows for improved extensibility, and it requires no client-side state registration.

In his talk, Toon discussed the design considerations and implementation details of webhooks in combination with BizTalk Server and Azure Event Grid.

In his demos, BizTalk Server publishes or consumes events that are sent or received through Azure Event Grid, and Azure Event Grid is responsible for managing the webhook registrations.
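Part of that registration management is a validation handshake: before Event Grid delivers events to a webhook, it sends a subscription validation event and expects the validation code to be echoed back. The Python sketch below shows the shape of such a receiver. It is not the code from Toon's demos; the function name `handle_delivery` and the `on_event` callback are our own, the sketch assumes the Event Grid event schema (a JSON array of events), and it leaves out the HTTP hosting and authentication entirely.

```python
import json

# Event type Event Grid uses for the subscription validation handshake
VALIDATION_EVENT = "Microsoft.EventGrid.SubscriptionValidationEvent"


def handle_delivery(body, on_event):
    """Process one webhook POST body; return (status_code, response_body).

    Hypothetical helper: `on_event` is called for every regular event.
    """
    events = json.loads(body)  # Event Grid schema: a JSON array of events
    for event in events:
        if event.get("eventType") == VALIDATION_EVENT:
            # Echo the code back to prove that we own this endpoint
            code = event["data"]["validationCode"]
            return 200, json.dumps({"validationResponse": code})
        on_event(event)
    return 200, ""
```

Keeping the handshake in the same handler as regular events means the endpoint can be re-validated at any time without a separate route.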

For event publishing scenarios, it is essential to implement retry policies, fallback mechanisms, proper security settings, and continuous endpoint validation.
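A retry policy with a fallback can be sketched as follows. This is a generic illustration rather than anything BizTalk- or Event Grid-specific: `publish_with_retry`, `send` and `fallback` are hypothetical names, and the fallback could be anything from a local queue to a suspended-message store.

```python
import time


def publish_with_retry(send, event, fallback, attempts=3, base_delay=0.5,
                       sleep=time.sleep):
    """Try `send(event)` up to `attempts` times with exponential backoff.

    Hypothetical helper: hands the event to `fallback` when every attempt
    fails. Returns True if the event was sent, False if it was parked.
    """
    for attempt in range(attempts):
        try:
            send(event)
            return True
        except Exception:
            if attempt < attempts - 1:
                # Wait 0.5s, 1s, 2s, ... before the next attempt
                sleep(base_delay * 2 ** attempt)
    fallback(event)  # e.g. park the event so it can be replayed later
    return False
```

In production code you would catch only the transient error types of your HTTP client instead of every `Exception`, and usually add some jitter to the delay.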

When consuming events, the focus should be on scalability, on the reliability of connections and on the fact that the sequence of incoming messages cannot be guaranteed. Toon pointed out that this last point in particular is often forgotten.
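One common way to cope with unordered delivery, when only the latest state per entity matters, is to compare each event's timestamp with the newest one already applied and to drop anything older. The sketch below is our own illustration of that idea, not something shown in the session; `LatestStateStore` is a hypothetical class, and it assumes every event carries a subject and a comparable event time.

```python
from datetime import datetime


class LatestStateStore:
    """Keeps the newest known state per subject and ignores stale events."""

    def __init__(self):
        self._latest = {}  # subject -> (event_time, data)

    def apply(self, subject, event_time, data):
        """Apply the event unless a newer (or equal) one was already seen."""
        current = self._latest.get(subject)
        if current is not None and event_time <= current[0]:
            return False  # stale or duplicate: arrived out of order
        self._latest[subject] = (event_time, data)
        return True

    def get(self, subject):
        entry = self._latest.get(subject)
        return None if entry is None else entry[1]


store = LatestStateStore()
store.apply("order/1", datetime(2018, 6, 6, 10, 5), "shipped")
store.apply("order/1", datetime(2018, 6, 6, 10, 0), "created")  # late arrival, ignored
print(store.get("order/1"))
```

This also makes the consumer idempotent for redelivered events. It does not help when every intermediate event must be processed in order; that requires buffering or sequence numbers instead.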

Refining BizTalk implementations – Mattias Lögdberg

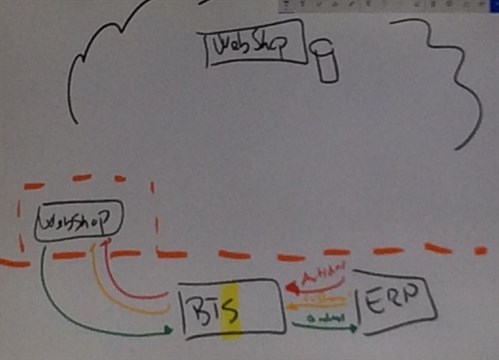

In this session, Mattias gave a real-life presentation of how he modernized an existing architecture to leverage cloud components.

The webshop was moved to the cloud, which brought some challenges in connecting it to on-premises systems like the ERP system.

The Azure services that were introduced include Table storage, API Management, Logic Apps, Azure Functions, and DocumentDB. Service Bus queues and topics, as well as DocumentDB, were used to throttle the load on the ERP system.

Monolithic applications were transformed to a set of loosely coupled components, and the complex framework was turned into microservices.

BizTalk is just as vital as before, but there are now more tools being added to the toolbox instead of just BizTalk to execute enterprise-grade integration.

Focused roundtable with Microsoft Pro Integration Team

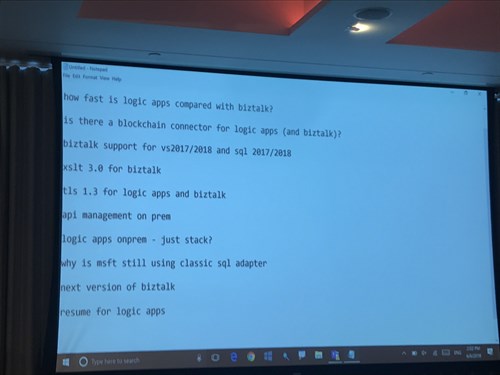

The last part of the day was the roundtable.

And some of the questions asked are below.

Thank you for reading our blog post. Feel free to comment or give us feedback in person.

This blogpost was prepared by:

Bart Cocquyt

Charles Storm

Danny Buysse

Jasper Defesche

Jef Cools

Jonathan Gurevich

Keith Grima

Matthijs den Haan

Michel Pauwels

Niels van der Kaap

Nils Gruson

Peter Brouwer

Ronald Lokers

Sjoerd van Lochem

Steef-Jan Wiggers

Toon Vanhoutte

Wouter Seye
