Intro

At Codit, we always want to learn and to train our colleagues in new technologies, and there is no better way to train than working on an actual (internal) project. Our latest project is the UEFA 2021 challenge. Our first blogpost in this series describes the general setup. In this post, we zoom in on the AI part.

Artificial Intelligence is increasingly becoming an integral part of our work as IT specialists. This is also true for Codit, where we use AI in several new projects. We are using our UEFA 2021 challenge to introduce AI to colleagues who have not worked with it yet. As we are an Azure-focused company, Azure Machine Learning was the natural choice.

Using AI

Azure Machine Learning Studio (Azure ML) is an environment suitable for both novice and experienced AI workers. For the novice, there is a GUI environment where you can graphically connect tasks. Examples of tasks are loading data, cleaning and transforming data, and training a model. There are a lot of models to start with, from general-purpose models for regression and classification to more specialized models for text analytics, image processing and more.

For the experienced user, there are a lot of possibilities thanks to the different AI libraries that are supported, such as PyTorch and TensorFlow. They can work in Python and in Jupyter notebooks, a web-based environment for writing and running Python code interactively.

But there is more to successful AI: collaborating on (large) datasets, keeping track of experiments and what datasets they use, deploying models so they can be called, and monitoring. All these aspects are supported by Azure ML.

AI infrastructure for the UEFA project

For this project we used several Azure ML components:

- Datasets to work with and collaborate on data

- Jupyter notebooks to experiment, using Python

- The designer, also for quick experimenting

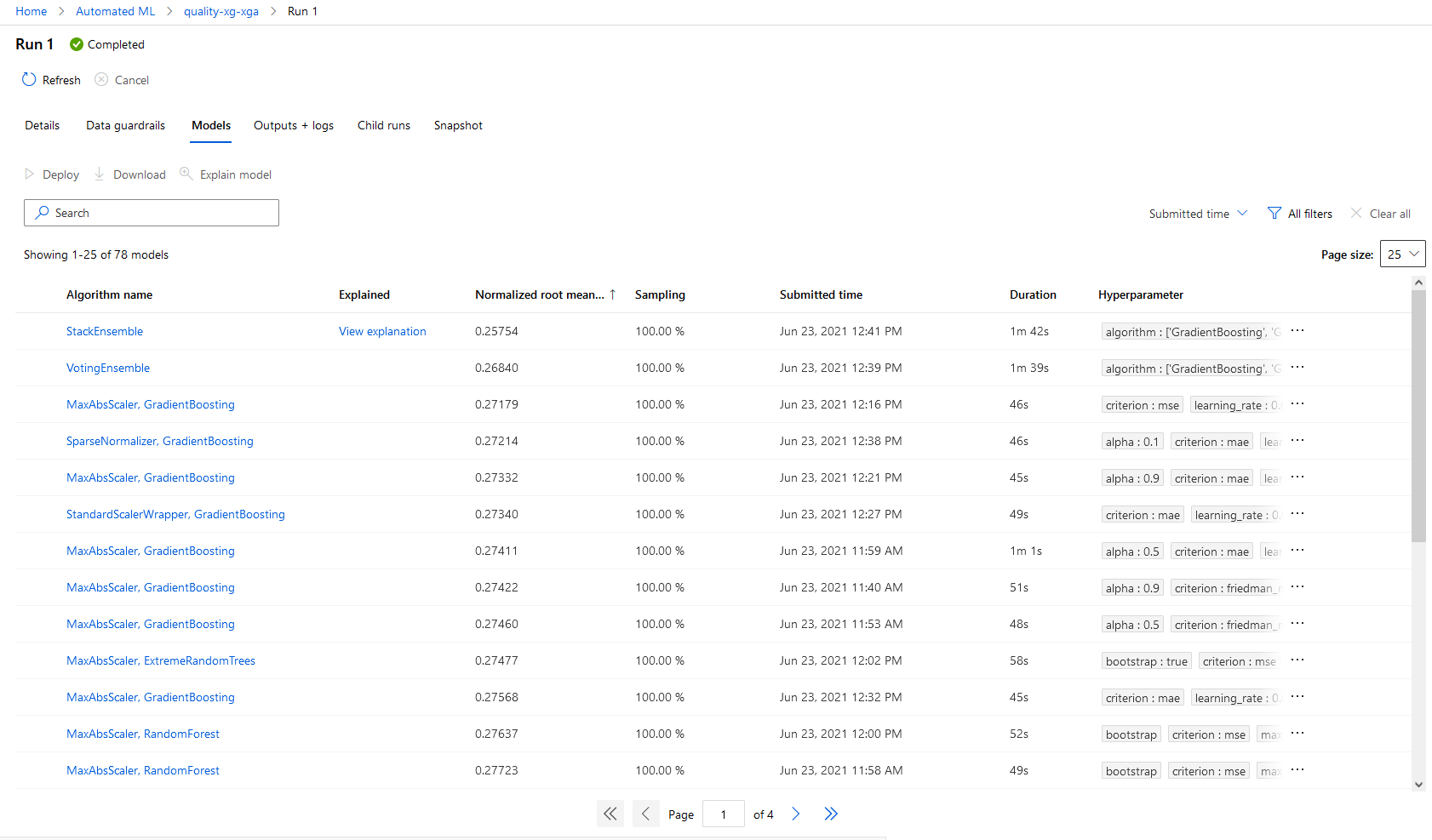

- AutoML to train models and select the best model

AutoML is a very handy feature for the novice AI worker, but it can also take over common tasks for the experienced worker. Let’s say you want to use AI to predict the outcome of a soccer game: win, lose or draw. This is a classification task. There are a lot of algorithms around, but which one gives the best results? Trying them all by hand can be cumbersome. Enter AutoML. You just provide the data, specify the input features (teams, date, etc.) and what it should predict (the game result), and Azure will try several algorithms and tell you which one performs best.

There is a lot more to tell about this feature, but we will save that for a later blogpost.
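To make the idea of "try several algorithms and keep the best one" concrete, here is a minimal plain-Python sketch. It does not use Azure AutoML or its API; the two toy "algorithms", the team names A to D and all match results are hypothetical and only serve to illustrate the selection loop.

```python
from collections import Counter, defaultdict

# Hypothetical toy data: (home_team, away_team, result for the home team).
TRAIN = [
    ("A", "B", "win"), ("A", "C", "win"), ("B", "C", "lose"),
    ("B", "D", "lose"), ("C", "D", "draw"), ("D", "A", "win"),
]
VALID = [
    ("A", "D", "win"), ("B", "A", "lose"), ("C", "B", "draw"), ("D", "C", "win"),
]


class MajorityClass:
    """Always predicts the most common result seen during training."""

    def fit(self, rows):
        self.label = Counter(result for _, _, result in rows).most_common(1)[0][0]
        return self

    def predict(self, home, away):
        return self.label


class HomeTeamHistory:
    """Predicts the home team's most common past result (majority as fallback)."""

    def fit(self, rows):
        self.fallback = Counter(result for _, _, result in rows).most_common(1)[0][0]
        self.by_team = defaultdict(Counter)
        for home, _, result in rows:
            self.by_team[home][result] += 1
        return self

    def predict(self, home, away):
        counts = self.by_team.get(home)
        return counts.most_common(1)[0][0] if counts else self.fallback


def accuracy(model, rows):
    """Fraction of matches in rows whose result the model predicts correctly."""
    hits = sum(model.predict(home, away) == result for home, away, result in rows)
    return hits / len(rows)


def select_best(candidates, train, valid):
    """Train every candidate on train, score it on valid, return the best name."""
    scores = {name: accuracy(cls().fit(train), valid) for name, cls in candidates.items()}
    best = max(scores, key=scores.get)
    return best, scores


CANDIDATES = {"majority_class": MajorityClass, "home_team_history": HomeTeamHistory}
best, scores = select_best(CANDIDATES, TRAIN, VALID)
print(best, scores)
```

An automated service does much more than this (real algorithms, feature preprocessing, hyperparameter tuning), but the core loop is the same: train each candidate, score it on data it has not seen, and report the winner.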

Man vs Machine: First results

So what about the models? How good are they? As mentioned in a previous post, we created an application where each Coditer can enter their predictions and see their score compared to the others. The two AI models also participate in this competition. Let us see how they are doing!

In our previous post, we mentioned that two approaches will be used:

- Using only historical data of previous matches (team history)

- Using all kinds of performance parameters of the players (team quality)

For both approaches, we start with a simple classification model that just predicts win, lose or draw. If we just look at how good they are at predicting, we see the following results:

- History model: 22 out of 36 games

- Quality model: 19 out of 36 games

- Best human player: 22 out of 36 games

So our relatively simple models are already outperforming most human players in this respect, and the history model even matches our best human player! Our models are already quite decent (or people at Codit are very bad at predicting soccer games…)
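To put these scores in perspective, here is a small Python sketch that converts them into accuracy percentages. The counts are the ones listed above; the random-guess baseline of one in three is our own added reference point, assuming a guesser who picks win, lose or draw with equal probability.

```python
GAMES_PLAYED = 36

# Correctly predicted games, taken from the results listed in this post.
correct = {
    "History model": 22,
    "Quality model": 19,
    "Best human player": 22,
}


def accuracy(hits, total):
    """Share of games predicted correctly."""
    return hits / total


for name, hits in correct.items():
    print(f"{name}: {hits}/{GAMES_PLAYED} = {accuracy(hits, GAMES_PLAYED):.1%}")

# Assumed reference point: uniform random guessing over three outcomes.
random_baseline = 1 / 3
print(f"Random guess (assumption): {random_baseline:.1%}")
```

This works out to roughly 61.1% for the history model and the best human player, 52.8% for the quality model, and 33.3% for the assumed random guesser.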

What is next

In our next blogpost, we will dive deeper into the data used and the models. We will also shine some light on how to integrate machine learning models with other applications.

And of course, we are very interested in how our models perform in the knockout phase. Can the machine beat the human? We will see!
