Also on day 2, our Codit Integrate 2019 elite has prepared a recap of all sessions.

Check it out below.

5 tips for production-ready Azure Functions – Thiago Almeida & Alex Karcher

Alex Karcher talked about some tips for production-ready Azure Functions. The most interesting tips were aimed at performance improvements of Azure Functions. Alex also provided some insights into how functions work behind the scenes and used this as a backdrop for explaining some of the tips and demos.

The 5 tips were around:

- Scaling Serverless APIs with HTTP

- Best practices around Event Stream Processing

- Event Hub Scale options

- Tips on CI/CD

- Tips around monitoring using Application Insights

The scale controller is a very important part of performance optimization, since it will automatically scale out resources based on the demanded workload. Take note that when you don’t enable pre-warmed instances, your new instances will start cold-booted, and you may not see the benefits of the automatic scale out when your Azure Function is very large.

Besides performance improvements, the most interesting tip Alex gave was about making use of Application Map in Application Insights, which provides a nice graphical overview of all related components in your integration. This will, for instance, help you find a faulty component. The only requirement for making use of Application Map is to make sure Application Insights is enabled.

Finally, Alex showed some of the improvements around creating CI/CD pipelines in Azure DevOps. He also showed the CLI updates, which can create the pipelines for you so that you don’t have to configure all the pipeline stages and tasks manually. More details can be found here: https://aka.ms/Functions-azure-devops

API Management: deep dive – Part 1: Miao Jiang

Miao Jiang walked us through the first part of the API Management deep dive session. He started the session by listing challenges in the deployment process. Challenges addressed were API deployment automation, configuration management for various environments, and the conflicts related to sources due to bigger teams.

He gave a promising demo of a CI/CD pipeline for API build and release management.

Generating ARM templates has always been an issue, but not anymore. Miao presented some cool tools that Microsoft built for generating the ARM templates. New tools like Extractor and Creator can be used to generate the ARM templates by reading the current API configurations. These are open-source tools available on GitHub.

He suggested using master templates, which reference individual API templates internally. A master template combines multiple API templates and can be used to deploy all APIs together. Single service templates can be used to release single APIs.
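To make the master template idea concrete, here is a minimal sketch that generates a master ARM template in which every API template is pulled in as a linked deployment. It is not the output of the Extractor or Creator tools. The storage URL, the `<api>-api.template.json` file naming and the `ApimServiceName` parameter are assumptions chosen for illustration only.

```python
import json
from typing import Dict, List


def linked_deployment(name: str, template_uri: str) -> Dict[str, object]:
    """Build one nested deployment resource that links to a single API template."""
    return {
        "type": "Microsoft.Resources/deployments",
        "apiVersion": "2021-04-01",
        "name": name,
        "properties": {
            "mode": "Incremental",
            "templateLink": {"uri": template_uri, "contentVersion": "1.0.0.0"},
            # Hypothetical parameter passed down to every API template.
            "parameters": {
                "ApimServiceName": {"value": "[parameters('ApimServiceName')]"}
            },
        },
    }


def master_template(base_url: str, api_names: List[str]) -> Dict[str, object]:
    """Combine the individual API templates into one master template."""
    resources = [
        # The file naming convention below is an assumption for this sketch.
        linked_deployment(f"{api}-api", f"{base_url}/{api}-api.template.json")
        for api in api_names
    ]
    return {
        "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        "parameters": {"ApimServiceName": {"type": "string"}},
        "resources": resources,
    }


# Hypothetical storage location that holds the individual API templates.
template = master_template(
    "https://example.blob.core.windows.net/templates", ["orders", "invoices"]
)
master_json = json.dumps(template, indent=2)
```

Deploying the master template rolls out all APIs together, while each linked template can still be deployed on its own to release a single API.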

Some Key Takeaways from the session for API release and deployment are listed below:

- Use separate service instances for environments

- Developer or Consumption tiers are good choices for pre-production

- Template based approach is recommended

- Modularizing templates provides a wide degree of flexibility for access control, governance and granular deployments.

In general, this session was helpful and satisfying for those in the audience who were concerned about the current complex deployment procedure.

Event Grid update – Ashish Chhabria/Bahram Banisadr

Bahram Banisadr started the session by introducing some core concepts regarding Event Grid. He explained that at its core it is a pub/sub system: event sources broadcast events to it, and event handlers, or sinks, consume and process those events.

On an architectural note, he highlights that event sources are completely unaware and do not care about who receives the events. On the other hand, the event handlers need to be aware of event sources to determine what events are of interest. In the end, the event handlers are responsible for processing the events and turning them into meaningful actions.
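The decoupling described above can be sketched with a tiny in-memory topic. This is only an illustration of the pub/sub idea, not the Event Grid service or its SDK. The `Topic` class and the event type names are hypothetical.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Event = Dict[str, object]
Handler = Callable[[Event], None]


class Topic:
    """In-memory stand-in for a topic; not the Event Grid service or SDK."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        # The handler decides which event types it is interested in.
        self._handlers[event_type].append(handler)

    def publish(self, event: Event) -> int:
        # The source only broadcasts; it never learns who handled the event.
        handlers = self._handlers.get(str(event["eventType"]), [])
        for handler in handlers:
            handler(event)
        return len(handlers)


topic = Topic()
shipped: List[Event] = []
topic.subscribe("Order.Shipped", shipped.append)

delivered = topic.publish({"eventType": "Order.Shipped", "subject": "/orders/1234"})
ignored = topic.publish({"eventType": "Order.Cancelled", "subject": "/orders/5678"})
```

The publishing code never references a handler, while the subscription names the event type it cares about. That is the asymmetry Bahram pointed out.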

Bahram emphasizes a specific anti-pattern by stating that you should always provide full context (not full data) with an event to allow the event handler to determine what to do next and prevent an unnecessary API call as a step to determine the next step in handling the event. He also clearly states that Event Grid is not meant for use as an asynchronous request/response or a log or ledger infrastructure.
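As a rough illustration of "full context, not full data", the sketch below compares a thin event with one that carries just enough context for the handler to decide on its next step. The event types, field names and action names are hypothetical and only serve the example.

```python
from typing import Dict

Event = Dict[str, object]

# Thin event: the handler must call back to the source to learn what changed.
thin_event: Event = {
    "eventType": "Order.StatusChanged",
    "subject": "/orders/1234",
    "data": {"orderId": "1234"},
}

# Contextual event: carries the status transition, but not the whole order.
contextual_event: Event = {
    "eventType": "Order.StatusChanged",
    "subject": "/orders/1234",
    "data": {"orderId": "1234", "previousStatus": "Paid", "newStatus": "Shipped"},
}


def next_action(event: Event) -> str:
    """Decide the next step using only what the event itself contains."""
    data = event["data"]
    if not isinstance(data, dict) or "newStatus" not in data:
        # Without context, an extra API call is needed before acting.
        return "lookup-required"
    if data["newStatus"] == "Shipped":
        return "send-tracking-mail"
    return "ignore"


thin_result = next_action(thin_event)
contextual_result = next_action(contextual_event)
```

The contextual event stays small because it describes the change rather than copying the full order record.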

Then, addressing the session’s title, he introduced Azure Maps, a new type of IoT event, and Service Bus as an event handler. Currently, only Service Bus queues are supported, and he invited everyone to check out this new feature in preview and provide feedback.

Other improvements mentioned are:

1 MB Events (Preview)

- Events over 64 KB will cost you extra, though.

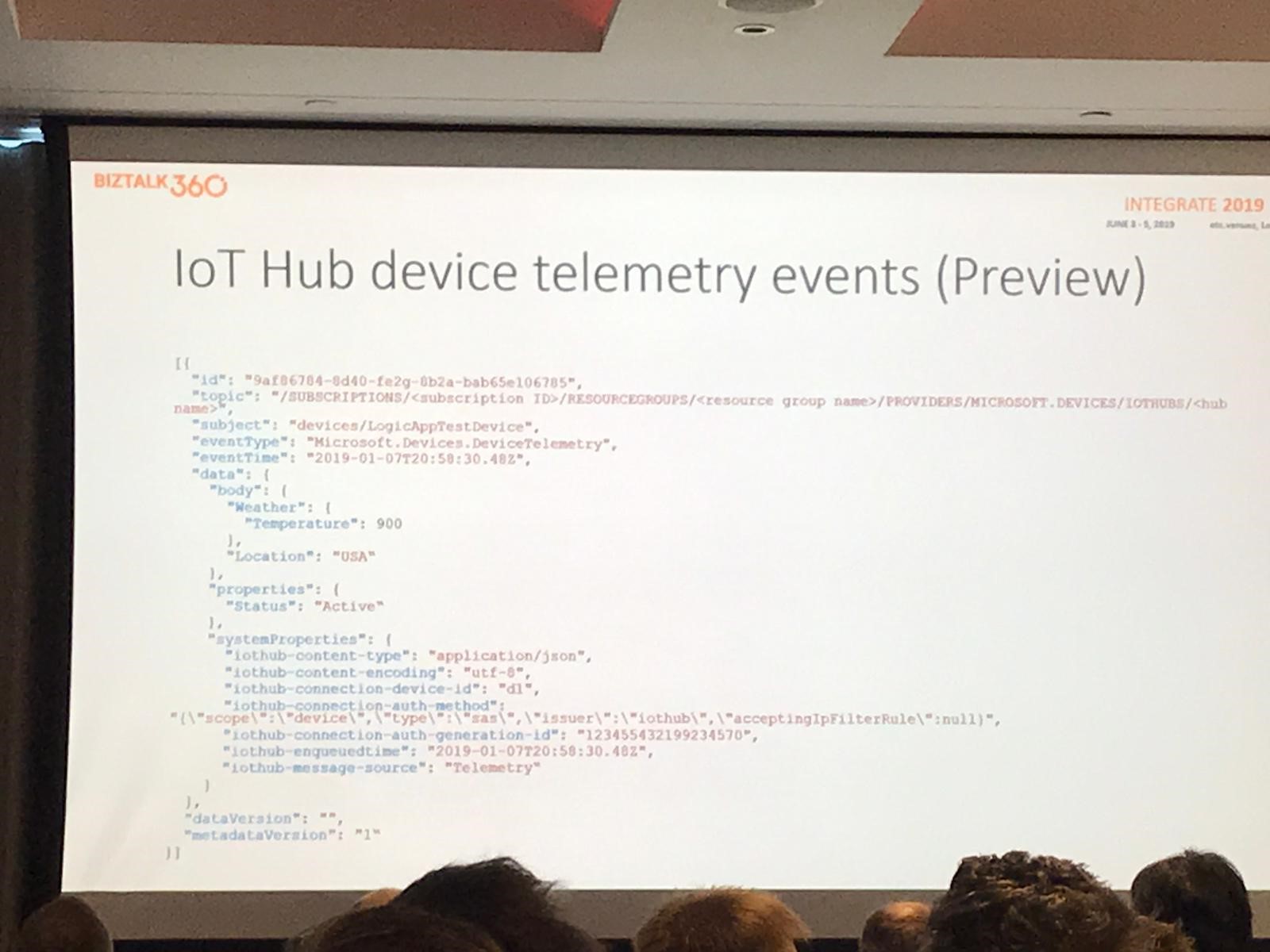

IoT Hub device telemetry events (Preview)

Although this is not meant for replacing event patterns to IoT hub it is a ‘pretty robust’ addition for use cases where advanced filtering on events is required before processing.

GeoDR (GA)

Now all event grid instances are guarded against metadata loss and service outage by default at no extra cost providing Disaster Recovery with the following Service Level Agreement (SLA):

- Metadata RPO: Zero minutes of topics & subscriptions lost

- Metadata RTO: 60 minutes till new CRUD operations

- Data RPO: Five minutes of events jeopardized

- Data RTO: 60 minutes for new traffic to flow

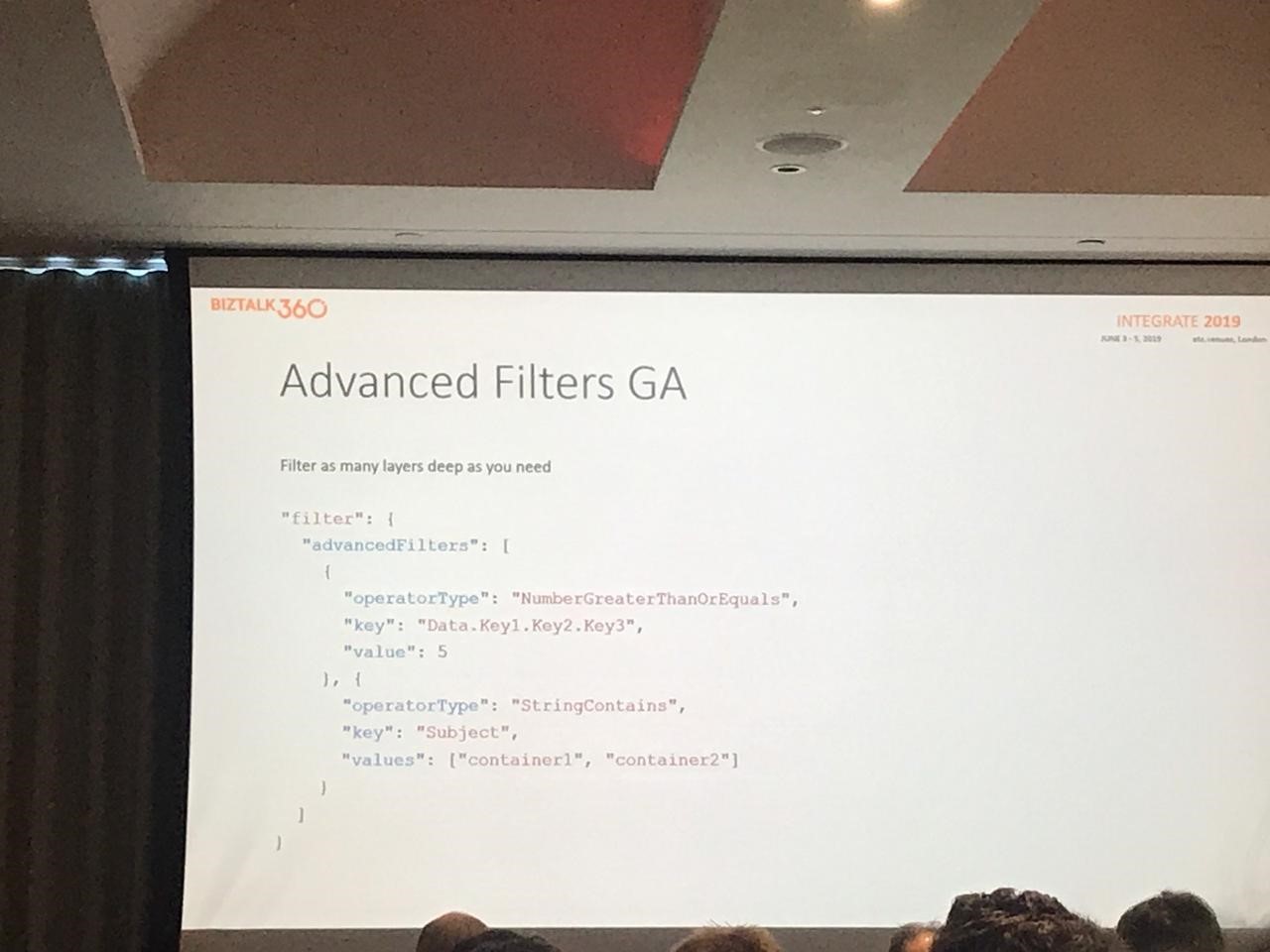

Advanced filters (GA)

Filter as many layers deep as you need by separating AND groups with OR statements.
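The AND/OR combination can be sketched with a small local evaluator. It assumes the semantics documented for Event Grid advanced filters: the values inside one filter are ORed, and separate filters are ANDed. The operator names mirror Event Grid's, but the matching logic is a simplified illustration (exact string matching, three operators only), not the service's implementation. The sample event is hypothetical.

```python
from typing import Any, Callable, Dict, List

# Simplified local versions of three operators; string matching is exact here.
OPERATORS: Dict[str, Callable[[Any, Any], bool]] = {
    "StringIn": lambda actual, expected: actual == expected,
    "StringBeginsWith": lambda actual, expected: isinstance(actual, str)
    and actual.startswith(expected),
    "NumberIn": lambda actual, expected: actual == expected,
}


def resolve(event: Dict[str, Any], key: str) -> Any:
    """Walk a dotted key such as 'data.country' through nested dictionaries."""
    current: Any = event
    for part in key.split("."):
        if not isinstance(current, dict) or part not in current:
            return None
        current = current[part]
    return current


def matches(event: Dict[str, Any], filters: List[Dict[str, Any]]) -> bool:
    """Every filter must match (AND); any value within a filter may match (OR)."""
    for advanced_filter in filters:
        actual = resolve(event, advanced_filter["key"])
        test = OPERATORS[advanced_filter["operatorType"]]
        if not any(test(actual, value) for value in advanced_filter["values"]):
            return False
    return True


filters = [
    {"operatorType": "StringIn", "key": "data.country", "values": ["BE", "NL"]},
    {"operatorType": "NumberIn", "key": "data.priority", "values": [1, 2]},
    {"operatorType": "StringBeginsWith", "key": "subject", "values": ["/orders/"]},
]

event = {
    "eventType": "Order.Created",
    "subject": "/orders/1234",
    "data": {"country": "BE", "priority": 2},
}

accepted = matches(event, filters)
```

An event from another country or with another priority fails one of the ANDed filters and is not delivered.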

Event Domains (GA)

Event domains are a management construct allowing you to manage all your topics in one place, set fine-grained authorization rules for each topic and publish all of your events to one endpoint. This simplifies governance, especially when separating business domains or internal and external domains.

A subscription supports up to 100 domains, each of which can hold up to 100,000 topics.

Additionally, up to 50 firehose event subscriptions are allowed.

Bahram concludes with a short term roadmap for Event Grid, including:

- Remove workarounds currently needed in, for instance, working with VNET

- Providing increased transparency to allow for better visibility if things go wrong.

- Support for CloudEvents (cloudevents.io)

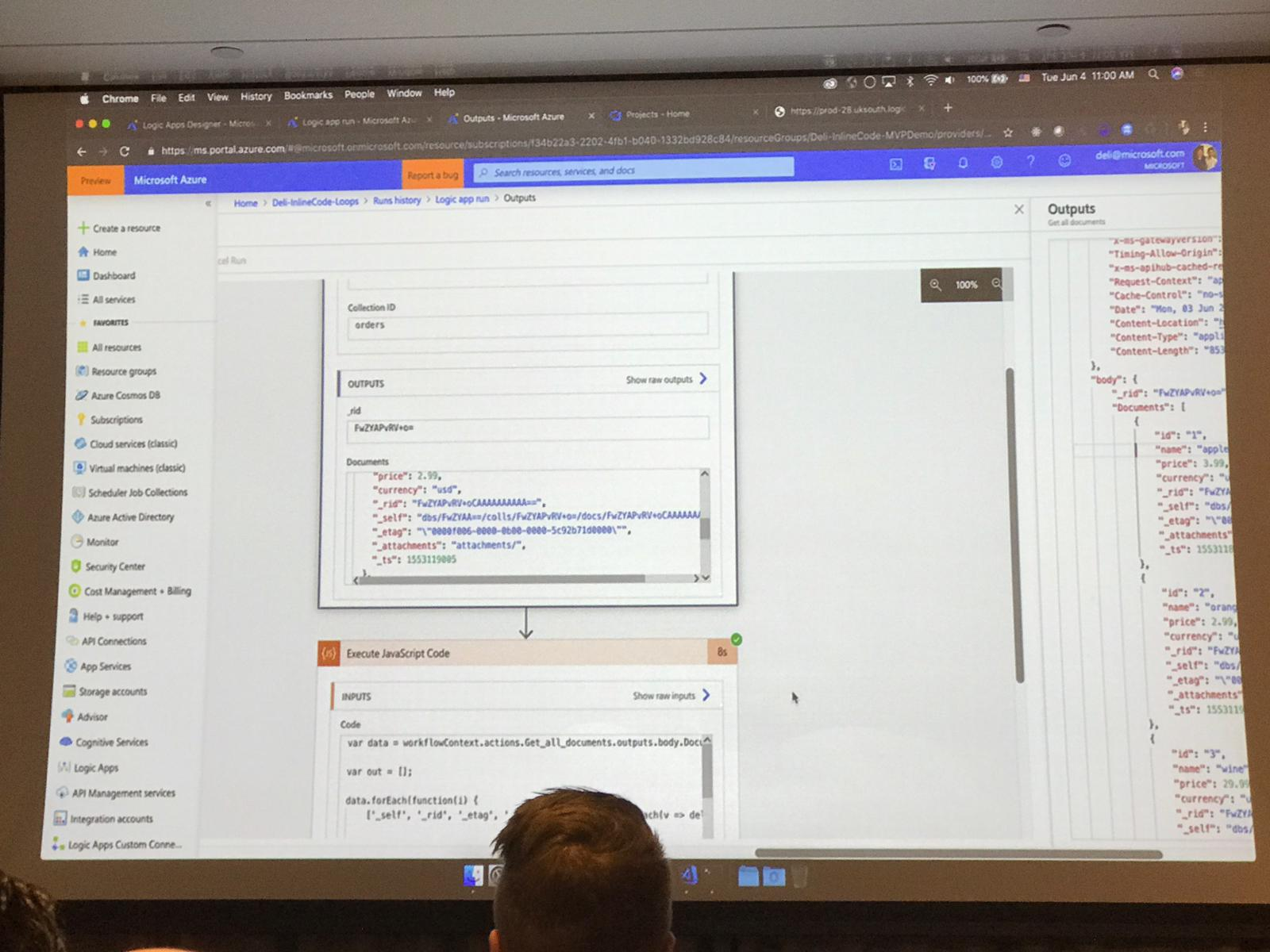

Hacking Logic Apps – Derek Li

There is a new action for inline code in Logic Apps, currently in public preview. The language is JavaScript, but more languages (PowerShell, C#) will be added in the future. It is intended for simple tasks; Function Apps remain the better choice for more complex tasks.

Demos were given about HTML formatting and message manipulation/cleanup.

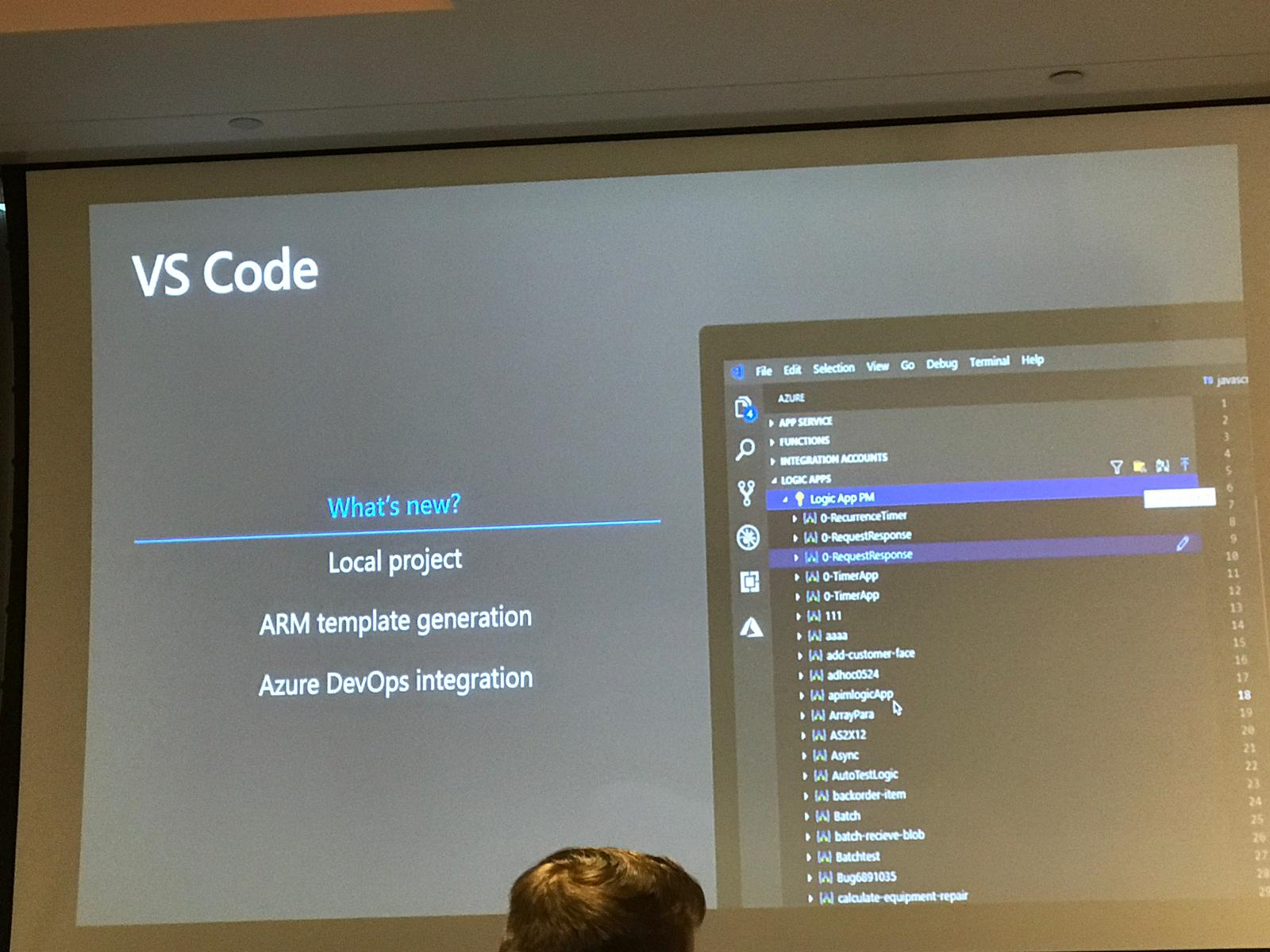

Derek also showed the improved Logic Apps extension for VS Code, which now supports local projects. It also supports ARM template generation, including a YAML pipeline definition. The VS Code extension is available as open source on GitHub.

Other new features:

- Condition in trigger instead of doing it right after the trigger saves money and gives a cleaner run history.

- Run against older versions allows a gradual rollout of the new Logic App (via API Management)

- Sliding windows trigger

API Management: deep dive – Part 2 – Mike Budzynski

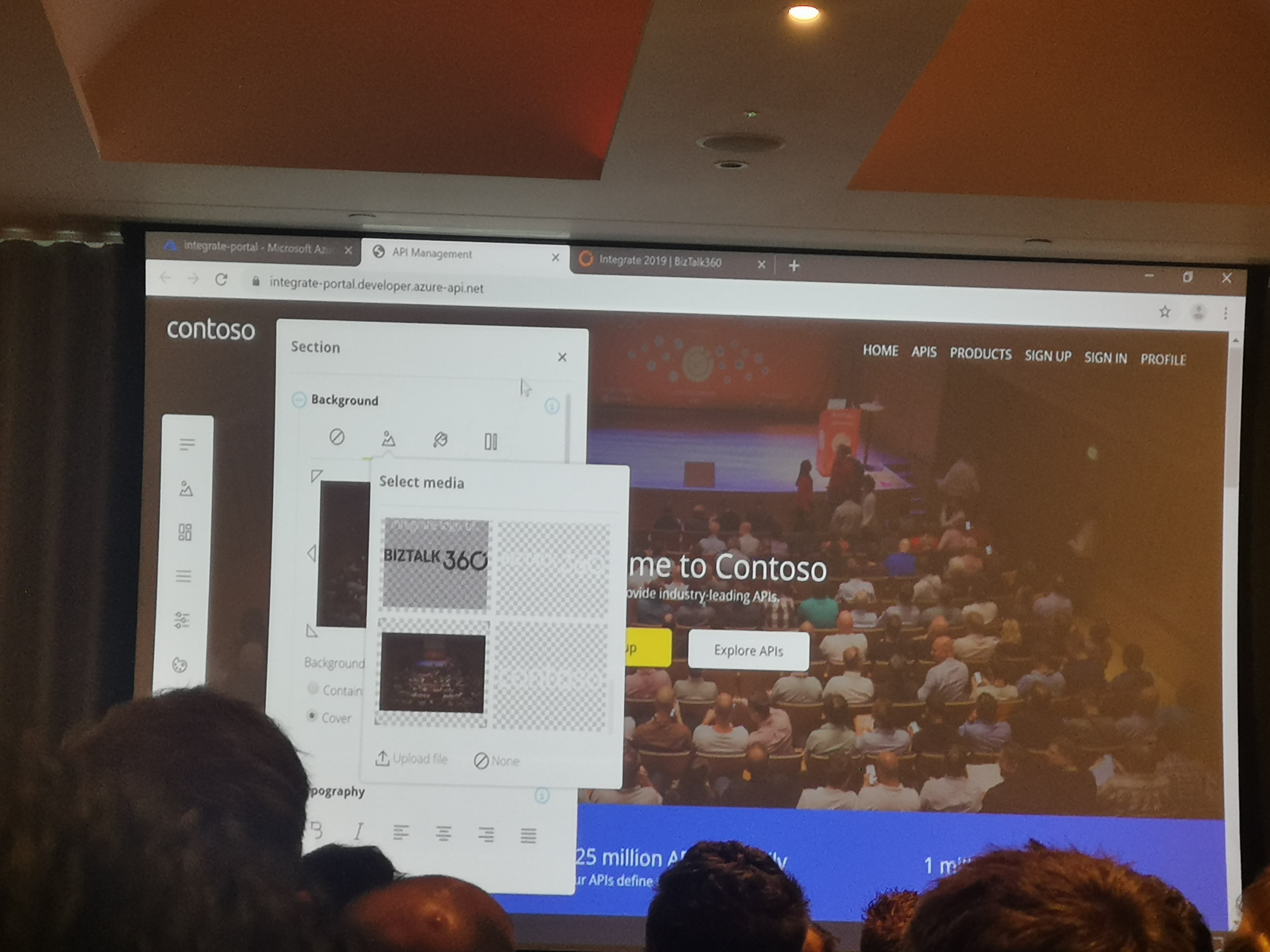

Next up was Mike Budzynski, with the 2nd part of the API Management deep dive. He introduced us to a brand new, improved and open-sourced API Management Developer Portal.

Based on research gathered from API providers (e.g. designers, marketing, etc.) and API consumers (e.g. developers, decision-makers, etc.), the team decided to build the portal from scratch, so that it could meet all the requirements. The newly built portal uses JAMstack (JavaScript, APIs and Markup). As a result, it has better performance, higher security, easier scalability and reduced customization time.

Features:

- Available in your instance name.

- Self-hosted and extensible.

- DevOps friendly, enabling automatic deployment.

- Stored within a Storage Blob

Moreover, Mike demonstrated how customizable this new Developer Portal is. After just a few clicks, he managed to change the whole layout of the portal. The following picture shows the customizations he did on the go!

Additionally, he gave an example of connecting an API operation to another environment by changing just a few lines of code.

For more information:

https://aka.ms/apimdevportal

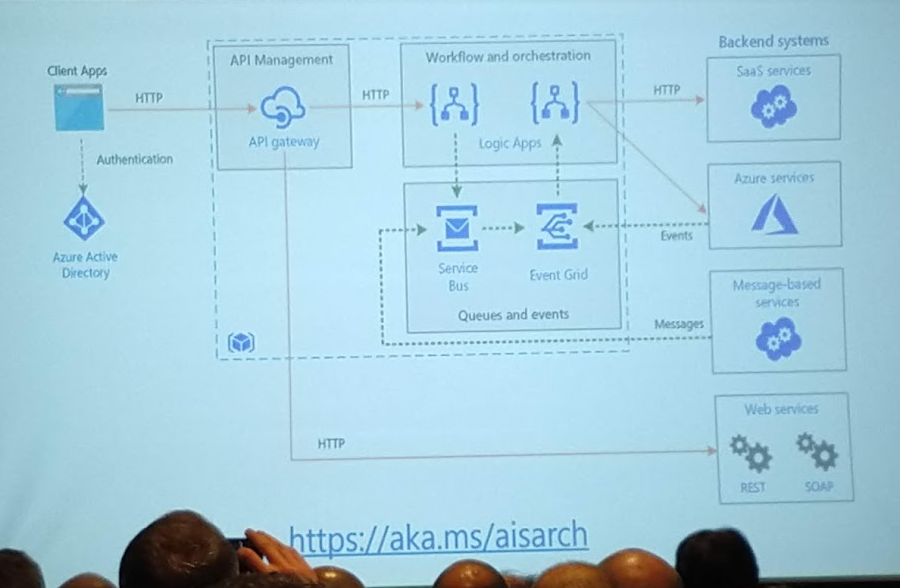

Making Azure Integration Services Real – Matthew Farmer

In this session, we learned some best practices in project and architectural approach for real integration solutions with Azure Integration Services. As integration is key to digital transformation, Gartner predicts that by the year 2022, 65% of large enterprises will have a hybrid integration platform.

It is important for designers to understand the key principles, such as an API-first approach, the orchestration of systems, message stores, and the event-driven model.

A somewhat controversial statement was that organizations should not set cost savings as the primary goal for an integration solution, but should choose strategy over tactics and value over cost. We tend to agree with this as cost saving will mostly be one of the results of an integration solution, but the added value by connecting systems will be much higher than the cost savings.

It’s also important for organizations to find the right balance in governance. Traditional large enterprises might over-govern, while governance might be taken too lightly in the typical proofs of concept and minimum viable products that customers are building. Codit believes the most important aspect of governance in an integration solution is having the entire DevOps automation set up well. This helps make things more agile and reliable.

And last but not least, the message from Matthew was clear: BizTalk customers should have a migration plan to Azure prepared. Most BizTalk artifacts (schemas, maps, and EDI agreements) can be imported into the Logic Apps Integration Account, while orchestrations and pipelines should typically be redesigned as Logic Apps. It is, however, important to embrace new concepts that bring an advantage: connectors, API economy, serverless and reactive scale. The cloud paradigm requires a different approach than BizTalk integration does.

Some interesting references:

- Reference architectures https://aka.ms/aisarch

- White paper on Microsoft integration: https://aka.ms/integrationpaper

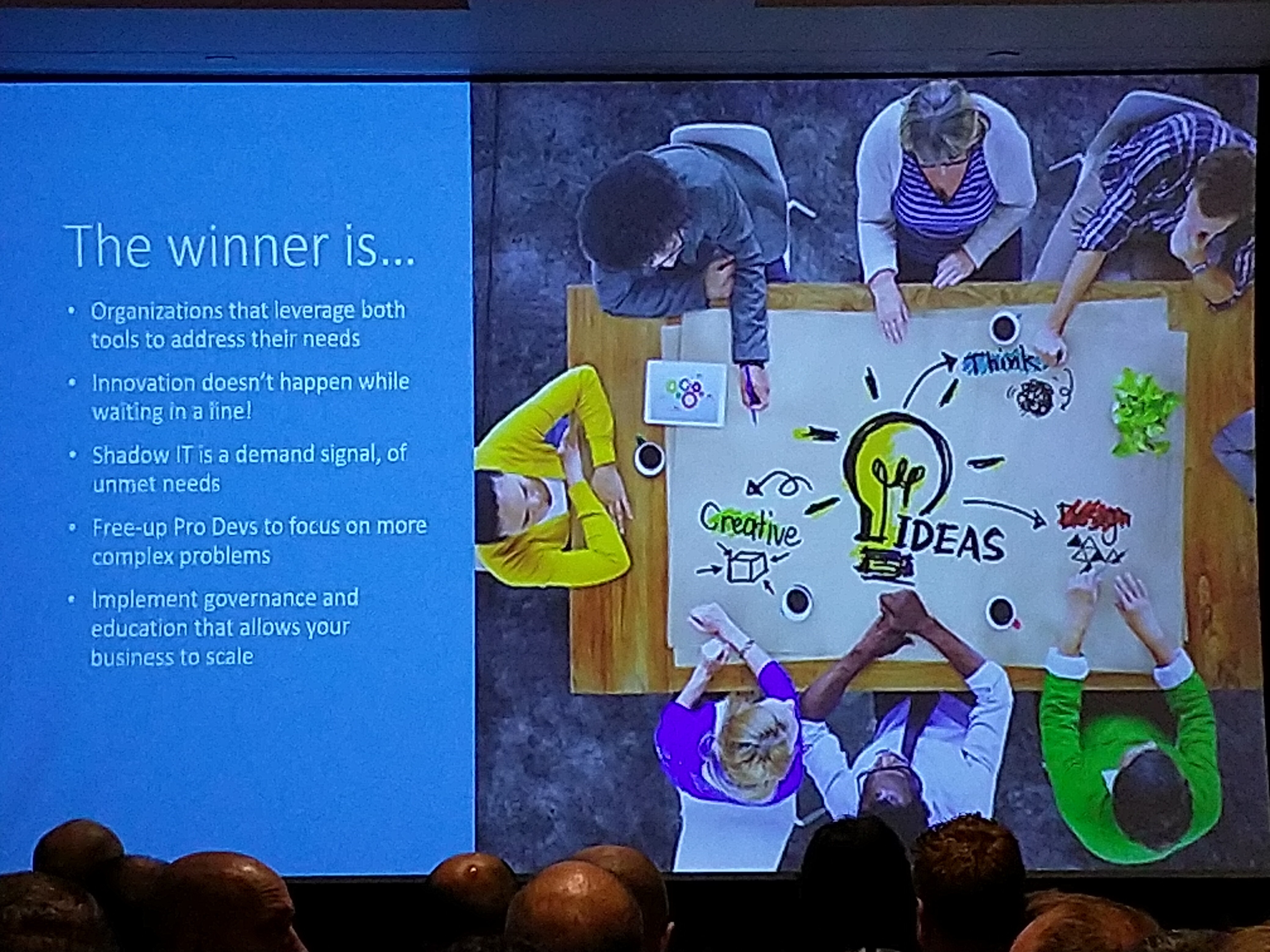

Azure Logic Apps vs Microsoft Flow, why not both? – Kent Weare

Kent Weare talked about the differences between Microsoft Flow and Azure Logic Apps.

Flow provides a lot of templates and connectors, supplied by Microsoft or other parties. Besides that, Flow puts an emphasis on non-complex use cases. A big feature of Flow is the concept of approvals, which enables you to start or continue a flow.

Logic Apps makes complex integration scenarios involving Integration Accounts, sensitive data, predefined XML or flat file schemas and maps easier. Furthermore, Logic Apps provide custom connectors, out-of-the-box monitoring, VNet connectivity, CI/CD and more.

It is not so much a decision you have to make between the two tools. The tool is less of a concern; the outcome is what matters most. The level of complexity mostly decides which tool to use for the type of job.

What’s there & what’s coming BizTalk360 & Serverless360 – Saravana Kumar

Saravana started with a description of the products of Kovai (the new company name of BizTalk360): Serverless360 and Atomic Scope. He pointed out the challenges that these products can solve and the capabilities they offer which are not available in the Azure Portal.

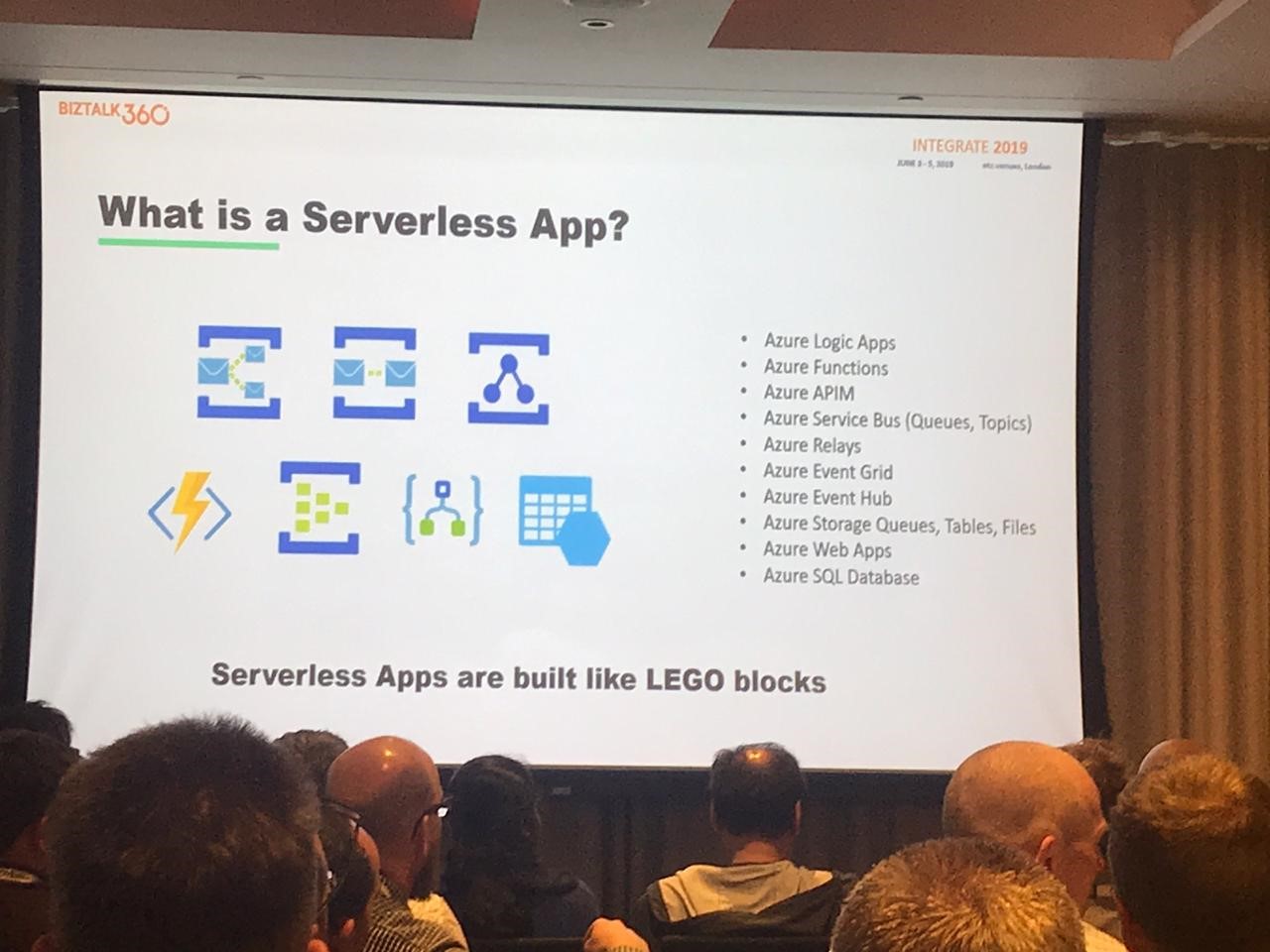

What is a serverless app?

Building serverless applications is like building with LEGO blocks. These reusable blocks include API Management, Storage, Functions, Logic Apps, etc.

Challenges/problems of Azure Portal:

- No visibility and hard to manage

- Complex to diagnose and troubleshoot

- Hard to secure and monitor

- Support is very critical. It’s the same for on-premises services as for Azure services.

Solutions:

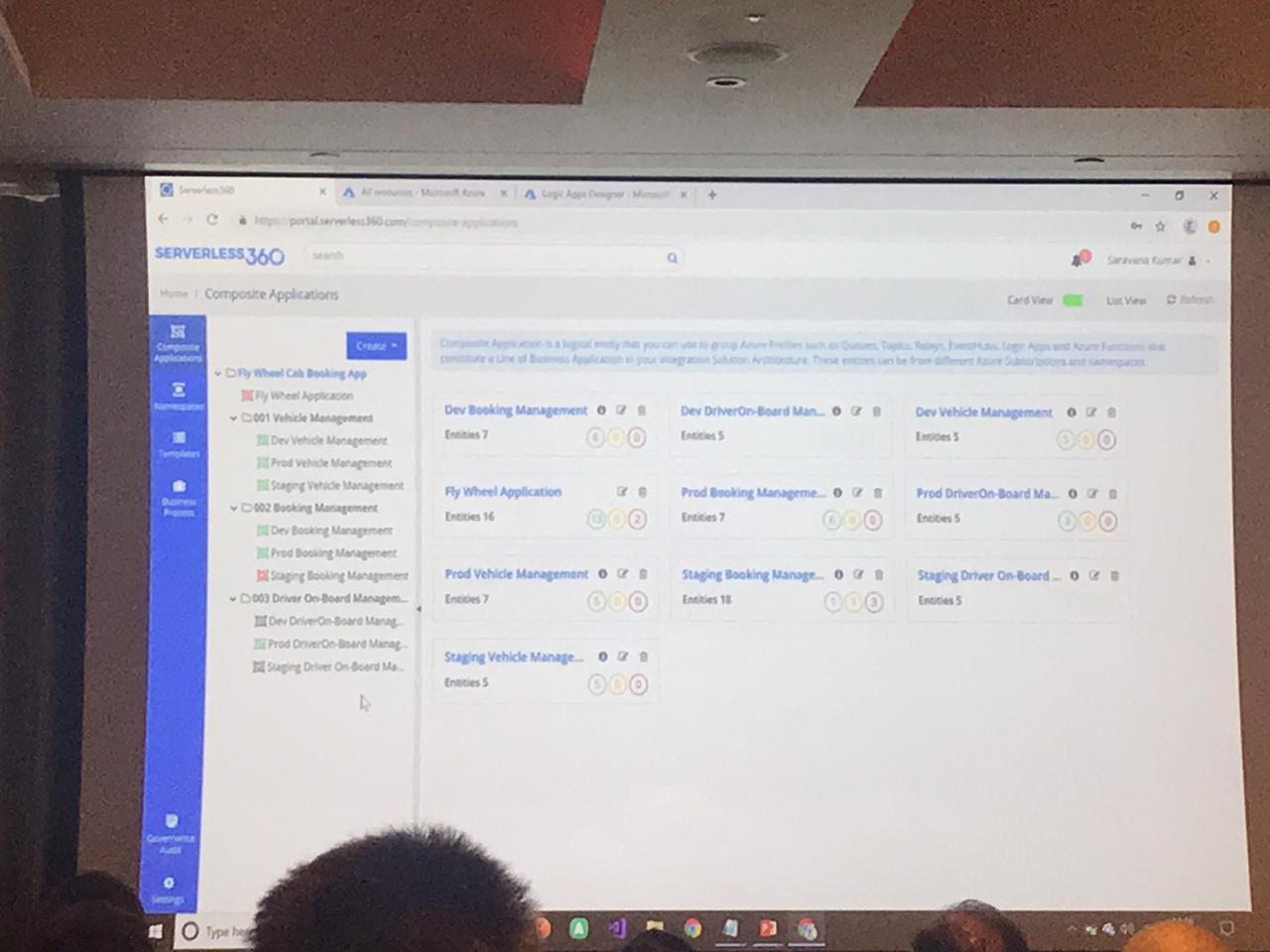

- Composite Applications and Hierarchical Grouping

- Service Map to understand the architecture

- Security & Monitoring under the context of application

Saravana then went on to show a demo of Serverless360, with:

- View different serverless applications

- Different views

- User management

- Different types of monitoring

- Autocorrect

Serverless360 is the replacement for the Azure Portal to easily support the applications in an aligned way.

It helps to improve your day-to-day operations with respect to support applications with:

- Granular user control, which allows you to provide or remove access for an individual

- Capabilities like Autocorrect

DevOps Improvement (Automate Everything):

- It’s not actually like CI/CD, but it’s like automation and improvement

- Templated entity creation

- Auto-process leftover message

- Auto-process dead letter messages

- Remove storage blobs on condition

- Replicate QA to production

- Detect & Autocorrect entity states

It is possible to get messages from a queue or dead-letter queue. This is not available by default in the Azure Portal, so normally you would have to download Service Bus Explorer for this.

It also makes it easy to import artifacts like queues and topics from one environment into another.

Where is my message? (Driver validation, PO, Booking Status)

Atomic Scope allows you to track messages using BAM (or another database), which can really be helpful for a support team.

BAM (end-to-end tracking)

- Message track using top to bottom flow

- Build new connector for start and stop activity for BAM tracking

Customer Scenarios:

- Ensure 15k client devices are active (using data monitoring)

- Monitoring solutions specific to different teams (composite application + monitoring)

- Auto-reschedule doctors’ appointments (using dead letter processing)

DEMO:

- Templates: properties, Service bus queue created based on template

- Retrieve messages from queue: get messages, repair and resubmit

- Active message processing

- Purging dead letter

Monitoring Cloud- and Hybrid Integration Solution Challenges – Steef-Jan Wiggers

Steef-Jan gave a good presentation on some of the challenges of monitoring cloud (and hybrid) integration solutions. He started out by setting the scene, providing a brief overview of a number of real-world cloud-native (and hybrid) integration solutions (scenarios like order processing, ML and AI). With these scenarios in mind, Steef-Jan moved on to exploring some of the challenges of monitoring solutions in these scenarios.

The challenges, however, aren’t always technical, and Steef-Jan described three areas which are often overlooked but tend to cause difficulties: People, Processes and Products.

Using the area of People as an example, it’s the organizational structure, support model and skill levels of the teams involved that have a huge impact on a team’s effectiveness in being able to monitor a solution. With well-thought-out processes and training, however, many of these challenges can be addressed.

Steef-Jan proceeded to review some of the monitoring products available on the market and some of the key strengths of each product. He discussed products such as Serverless360, BizTalk360, Atomic Scope and Codit’s very own Invictus Framework, amongst others.

He also went back to the scenarios and mapped the products onto them to illustrate where each product fits best, paying careful attention to the differences between functional monitoring and technical monitoring. Functional monitoring here means tracking messages through processes (for example: did purchase order #1234 reach its intended destination? Think BAM), whereas technical monitoring covers the status of a component.

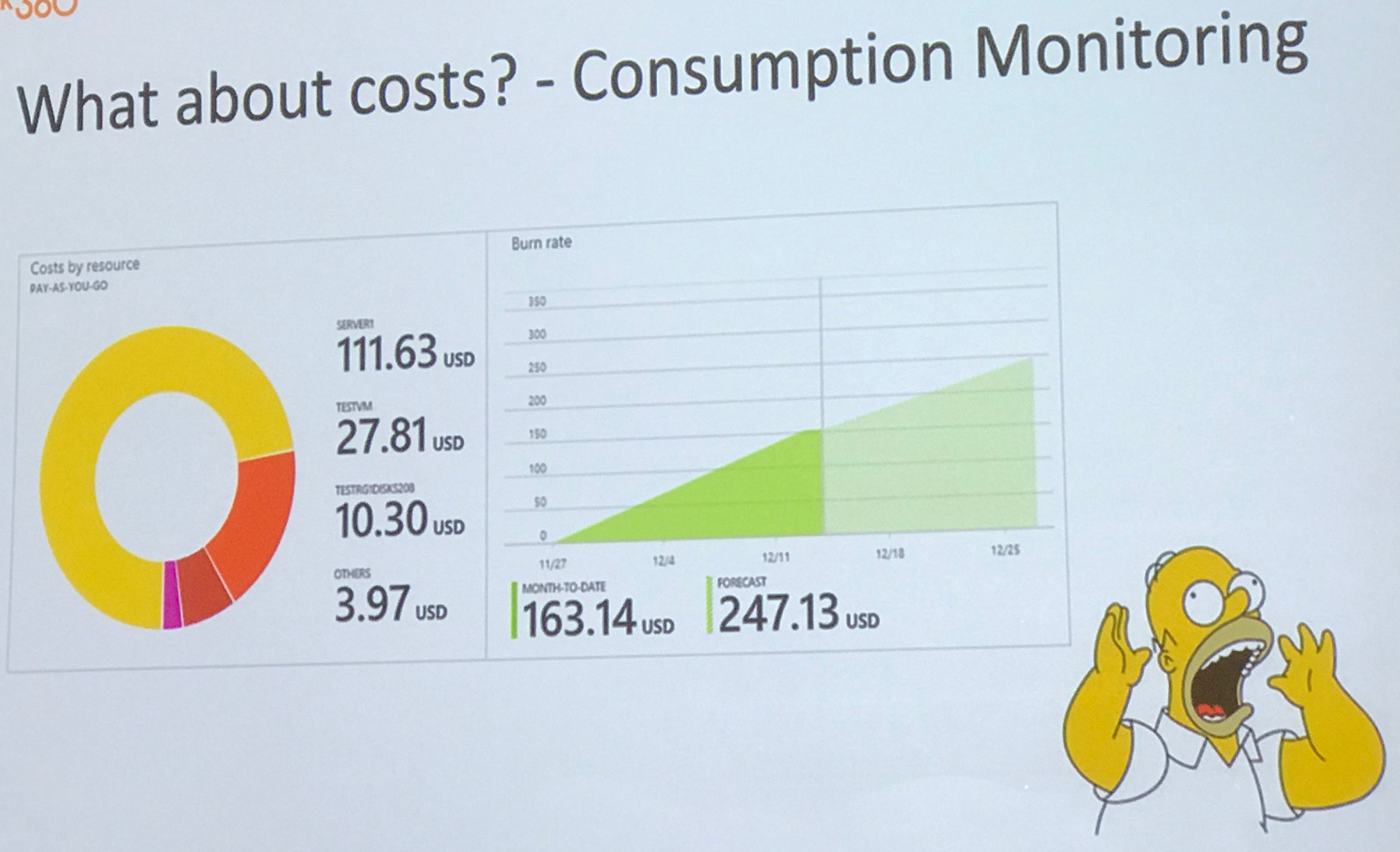

The best tip of all and a key takeaway from this presentation is to monitor your cost consumption to avoid nasty surprises later.

Modernizing Integrations – Richard Seroter

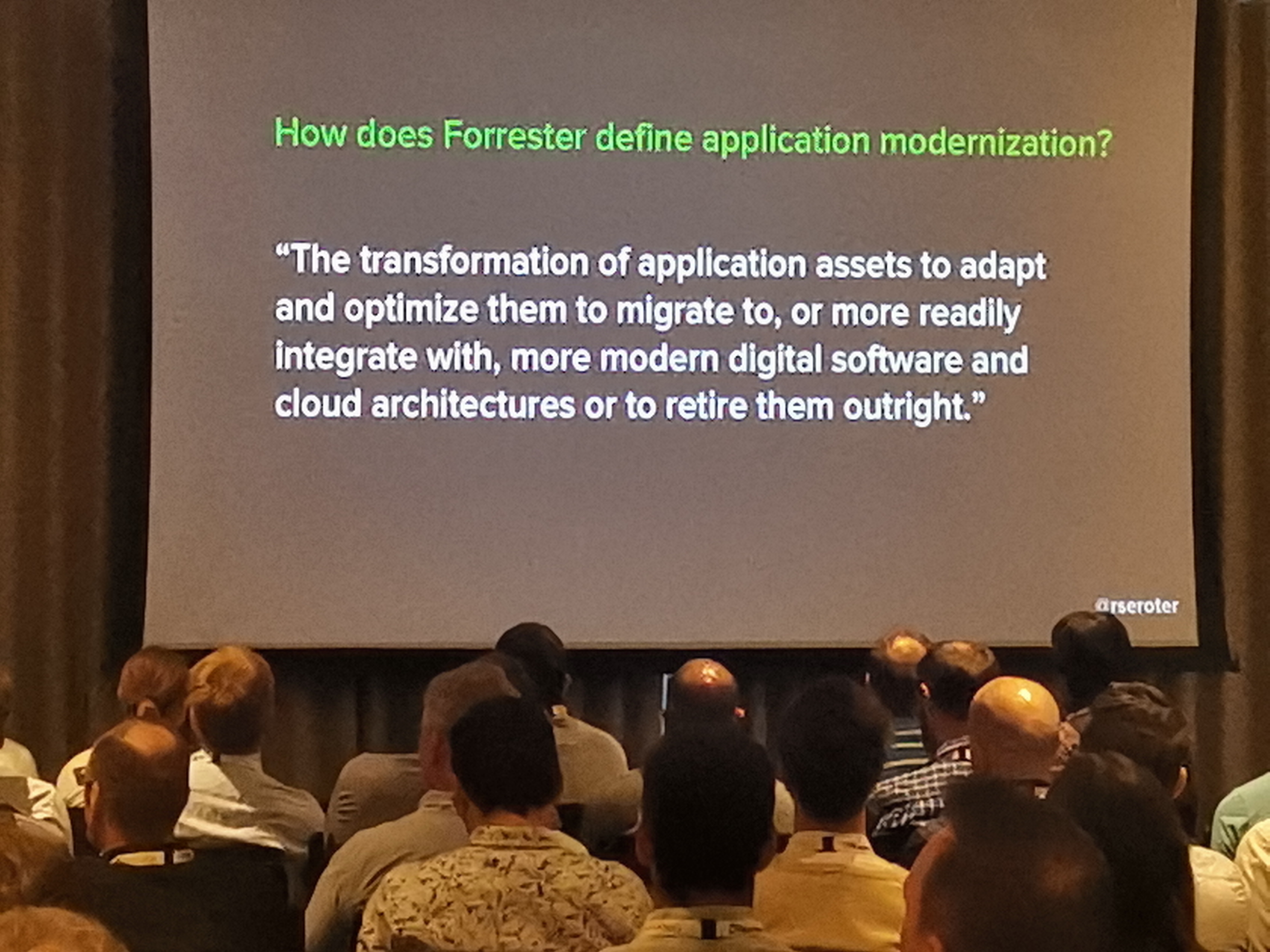

In this session Richard Seroter talked about modernizing BizTalk Server (BTS) regarding cloud-based hybrid connection integrations.

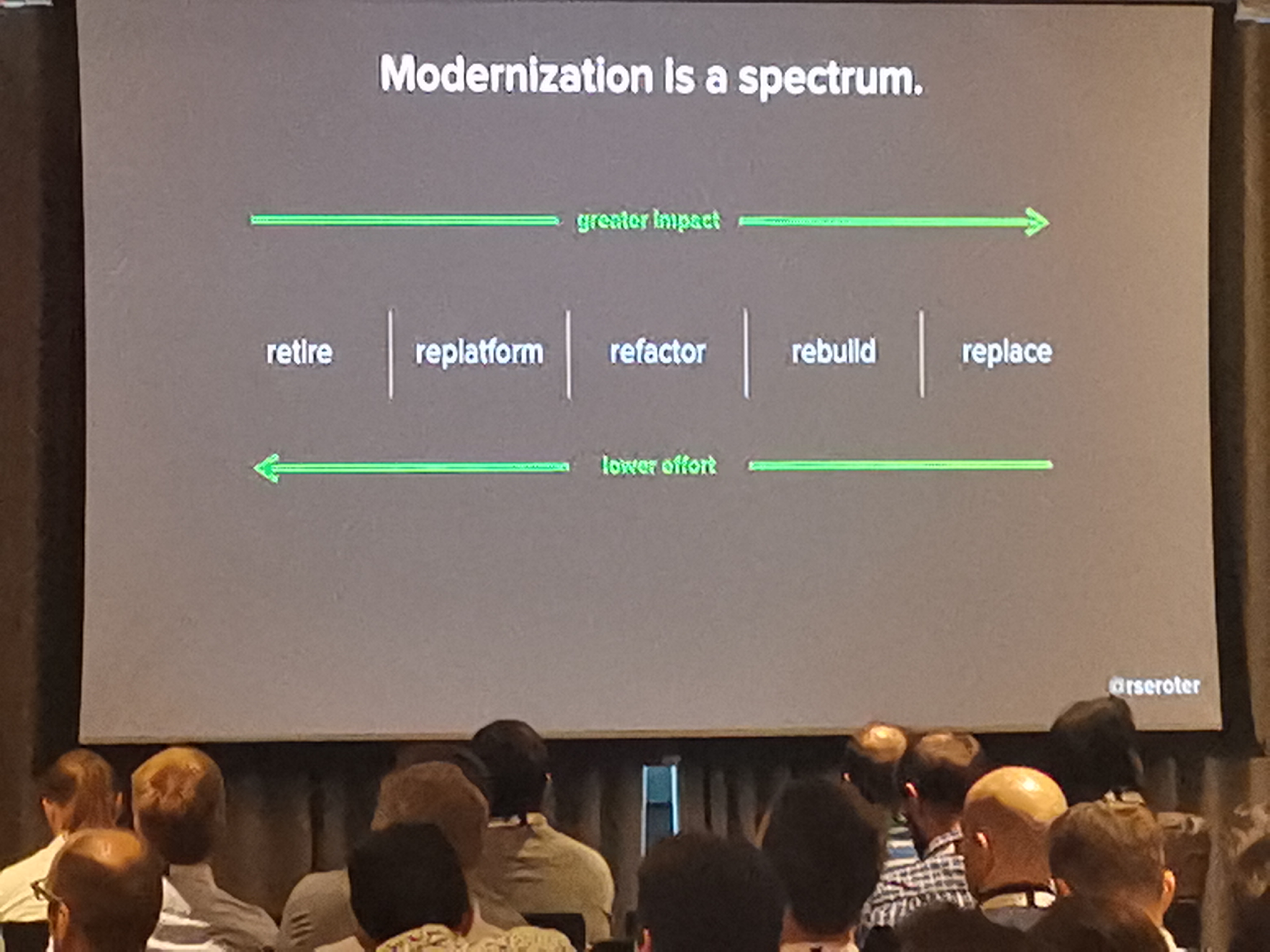

Modernization can be seen as a spectrum along which systems can be retired (lowest complexity), replatformed, refactored, rebuilt and/or replaced (highest complexity).

There are some considerations when modernizing integrations:

- Think about system maturity

- Unlearn what you know

- Introduce new components

- New endpoints and users

- Auditing skill

- Interaction patterns

- Automatic deployment

- Choose the host

- Monitoring

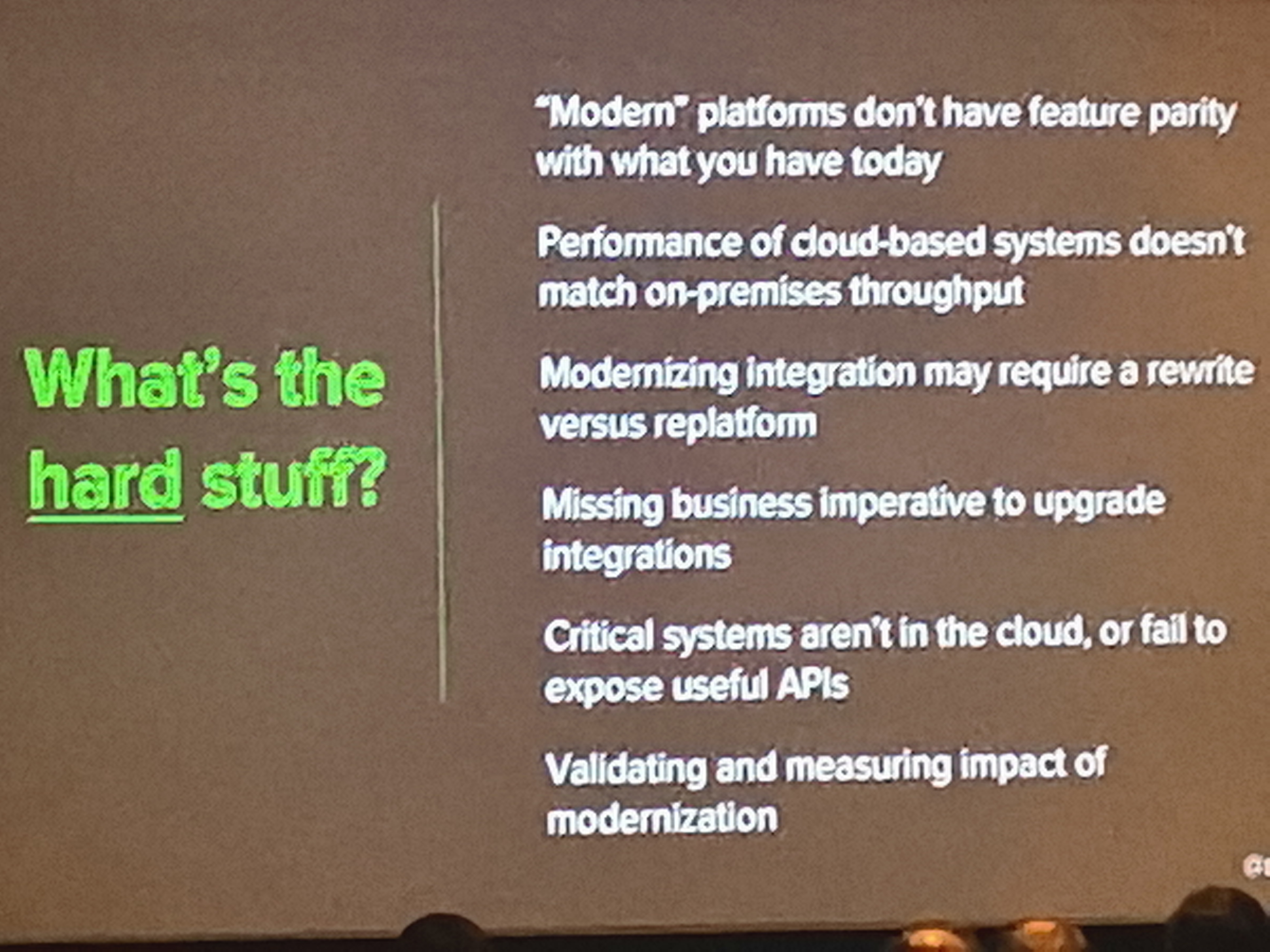

These considerations can, however, pose challenges such as:

- You might not have feature parity

- Performance might be reduced

- How to provide proof of a business case to prove throughput

- Critical systems might not have the right requirements

- Validating and measuring impact

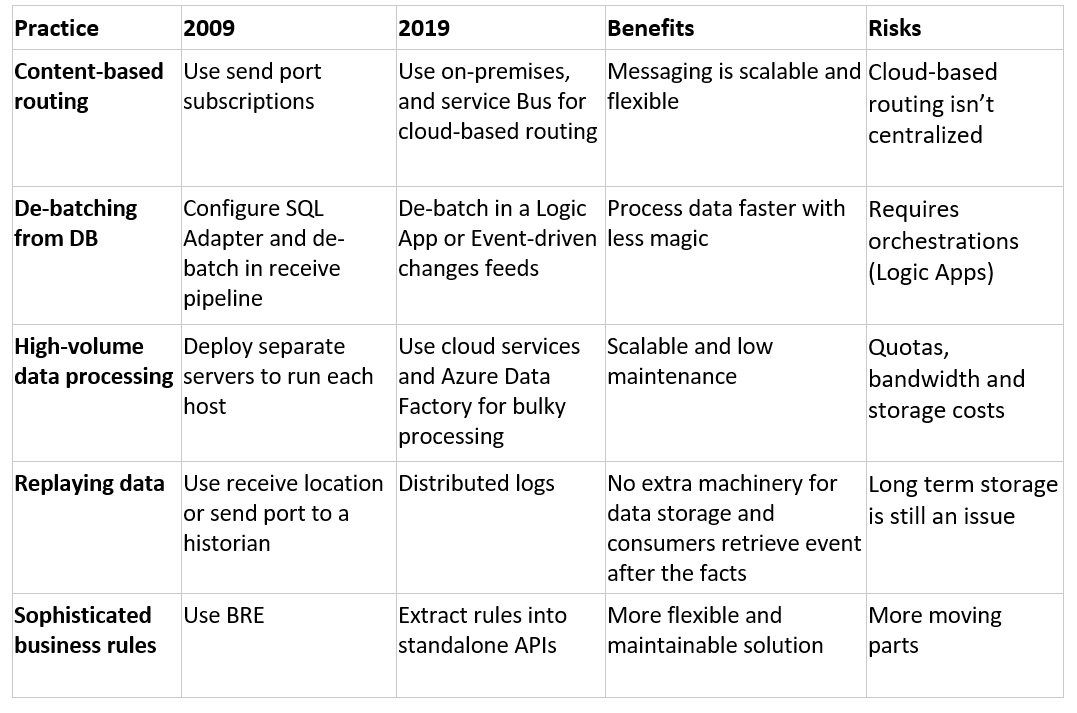

Richard Seroter also shared some best practices. Here are a few examples:

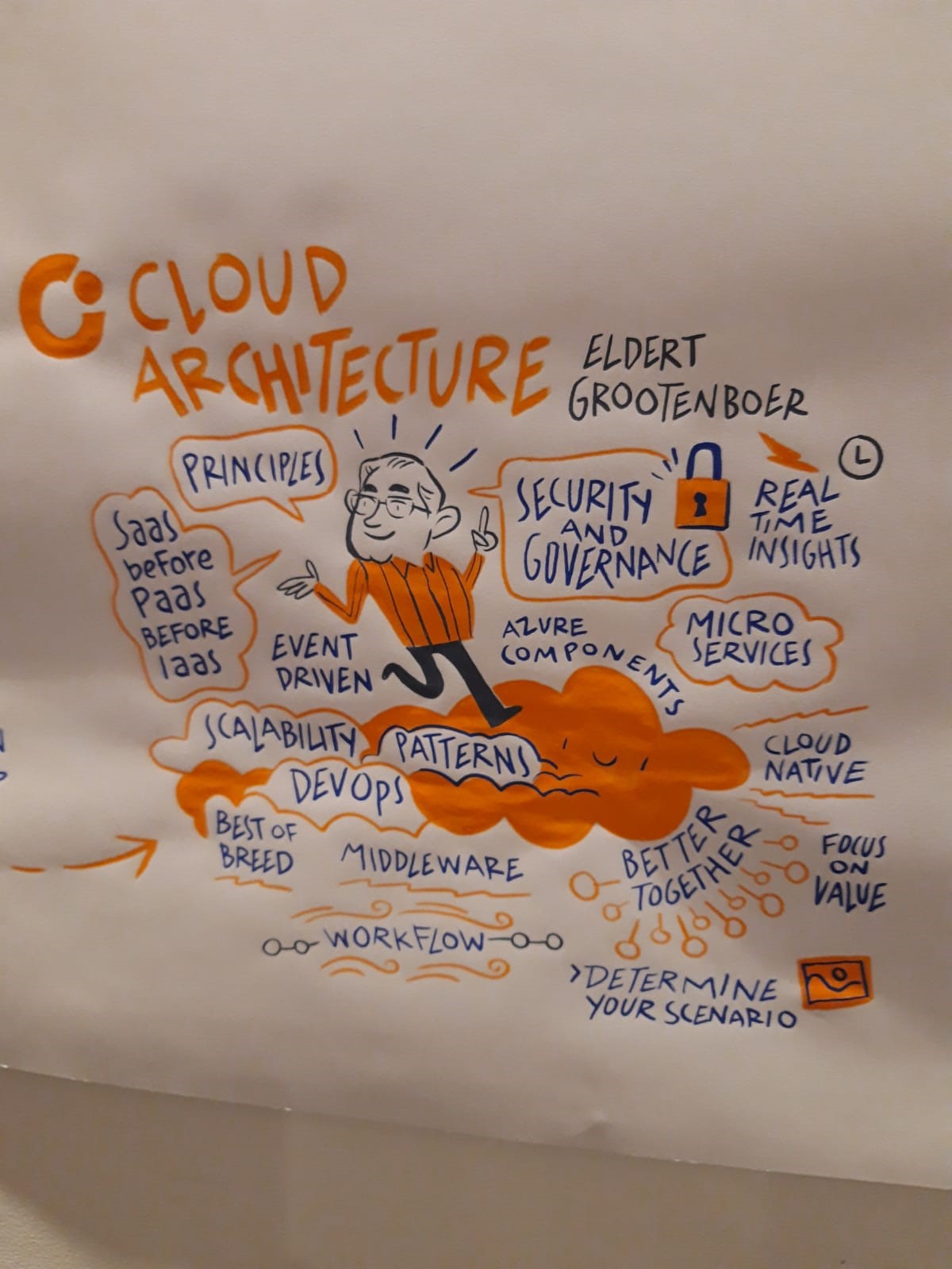

Cloud architecture recipes for the Enterprise – Eldert Grootenboer

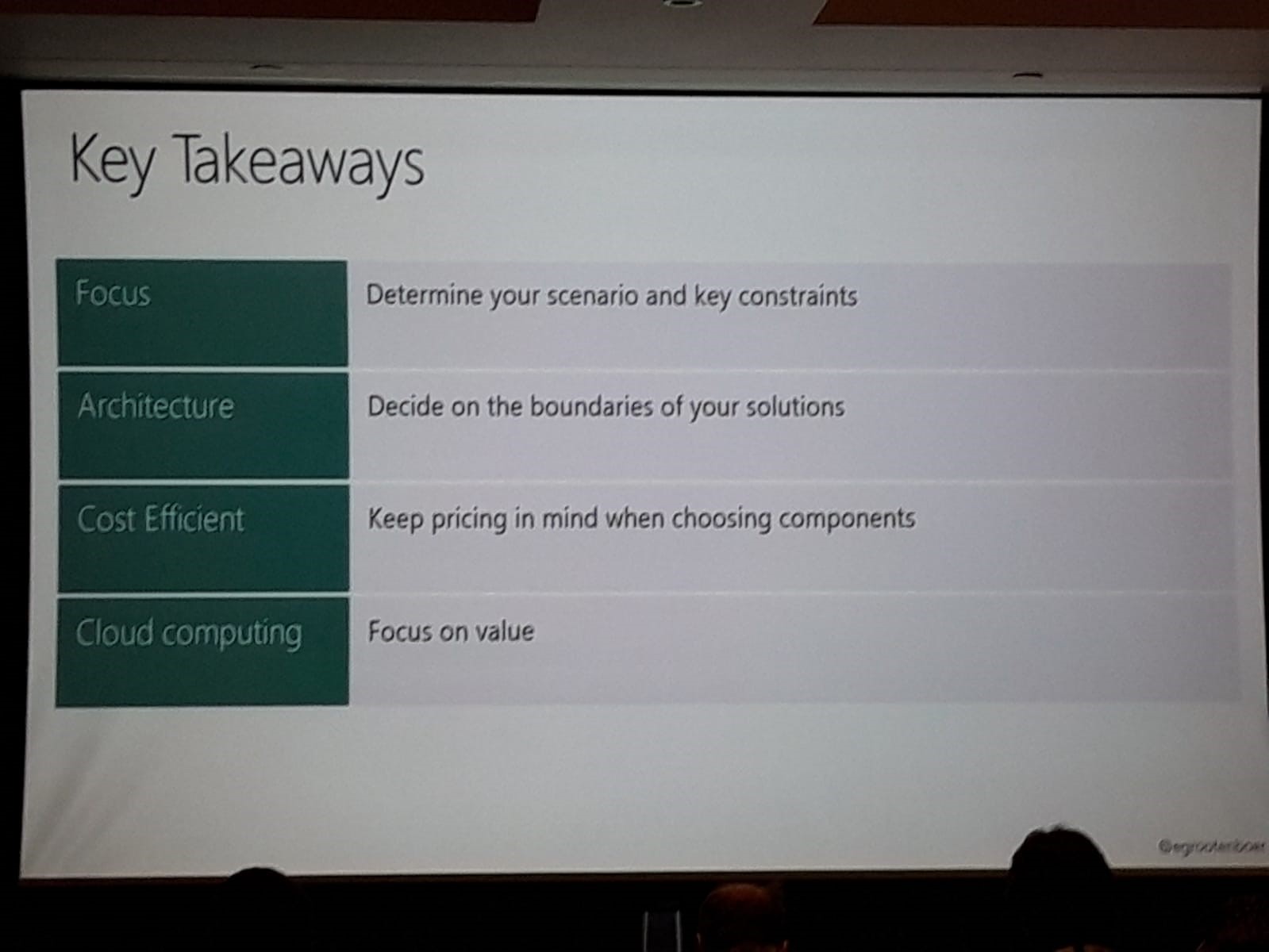

In this session, an overview of common and well-known best practices was given.

Eldert iterated on the architecture principles that we have been applying since the early 2000s (such as Loose coupling, Integration patterns, pub-sub, and monitoring) and added a few cloud-specific principles, such as scalability and DevOps. We fully agree with the fact that these principles should be set first, before starting the actual development.

Another practice we indeed see quite commonly used by enterprises is to choose SaaS (consume) over PaaS (build) over IaaS (host). This will deliver value much faster and reduce the cost of maintenance and building. That’s why we believe that customers should only build what differentiates them from their competition.

After giving a quick overview of the various Azure services for Serverless, integration, and development, several architectural diagrams were shown where serverless was being used in Microservices, Workflow and other solutions. An important learning is indeed to stick to technologies that are known and only add new technologies when you need them, not when you can.

Thank you for reading our blog post. Feel free to comment or give us feedback in person.

Bart Defoort, Carlo Garcia-Mier, Charles Storm, Erwin Kramer, Francis Defauw, Gajanan Kotgire, Jonathan Gurevich, Kapil Baj, Martin Peters, Miguel Keijers, Nisha Pillai, Peter Brouwer, Sam Vanhoutte, Serge Verborgh, Sjoerd van Lochem, Steef-Jan Wiggers and Tim Lewis
